Software Modernization ROI: A Defensible Calculation Guide

Most software modernization ROI models are fiction with spreadsheets.

They assume the implementation lands on time, the migration path is technically sound, the organization can absorb the change, and the business benefits appear on schedule. That’s how teams end up approving a program with a clean payback chart and then spending the next year explaining why costs rose before benefits arrived.

The market data already tells you the problem. In a 2025 survey of over 500 U.S.-based IT professionals, 62% of organizations still rely on legacy software, and 25% cite lack of clear ROI as a top barrier. I don’t read that as hesitation. I read it as a sign that many teams still model ROI in ways that don’t survive executive scrutiny.

A defensible software modernization ROI case has to do three things at once. It must capture the full cost base, quantify returns beyond infrastructure savings, and discount the whole model for execution risk. If you skip any one of those, your business case is optimistic at best and misleading at worst.

Why Your Current ROI Model Is Dangerously Incomplete

Many organizations still calculate software modernization ROI as a simple ratio of expected savings over project cost. That formula is fine for a server refresh. It breaks down fast when you’re changing architecture, delivery workflows, data movement, security controls, and operating model at the same time.

The first flaw is that naive models treat implementation as deterministic. They assume the migration completes, the cutover works, and the team adopts the new platform. Reality is messier. Even before you get to failure rates, most organizations struggle to produce a clean line between current-state costs and future-state value.

The second flaw is that teams often isolate only visible spend. They count vendor fees, cloud bills, and licenses, then ignore developer friction, parallel-run overhead, defect remediation, and the operating burden of carrying old and new systems together. Those omissions don’t make the costs disappear. They just move them off the slide deck.

The vendor pitch problem

Vendor ROI models usually start from a best-case endpoint. They assume the target architecture delivers as designed and that each claimed gain flows straight into the P&L. That’s useful for a sales cycle. It isn’t useful for capital allocation.

Practical rule: If the model can’t explain what happens when the program slips, stalls, or lands only partially, it isn’t an ROI model. It’s a scenario sketch.

What a defensible model includes

A modernization business case becomes credible when it includes:

- True investment cost: not just build cost, but disruption cost, remediation cost, and transition cost.

- Multi-layer returns: direct savings, operational risk reduction, and strategic enablement.

- Probability weighting: expected value after accounting for non-completion, underperformance, and scope distortion.

- Decision thresholds: conditions under which the organization should pause, phase, or not modernize at all.

That last point matters. Some systems should be modernized now. Some should be ring-fenced and retained. Some should be retired instead of rebuilt. An ROI model that always ends in “proceed” isn’t analysis.

Baseline Your True Costs, Not Just the Obvious Ones

The denominator in software modernization ROI is where most bad models start. Teams gather implementation estimates, add contingency, and call it investment. That misses the larger economic question. What does the organization spend to keep the legacy estate alive today, and what new costs will the transition introduce before the target state stabilizes?

The best starting point is a current-state baseline across several months. Capture deployment friction, change lead time, production incident patterns, defect escape, infrastructure spend, contractor dependence, and the amount of engineering capacity consumed by maintenance instead of delivery. That gives you something most ROI decks lack: a reference point that can survive audit.

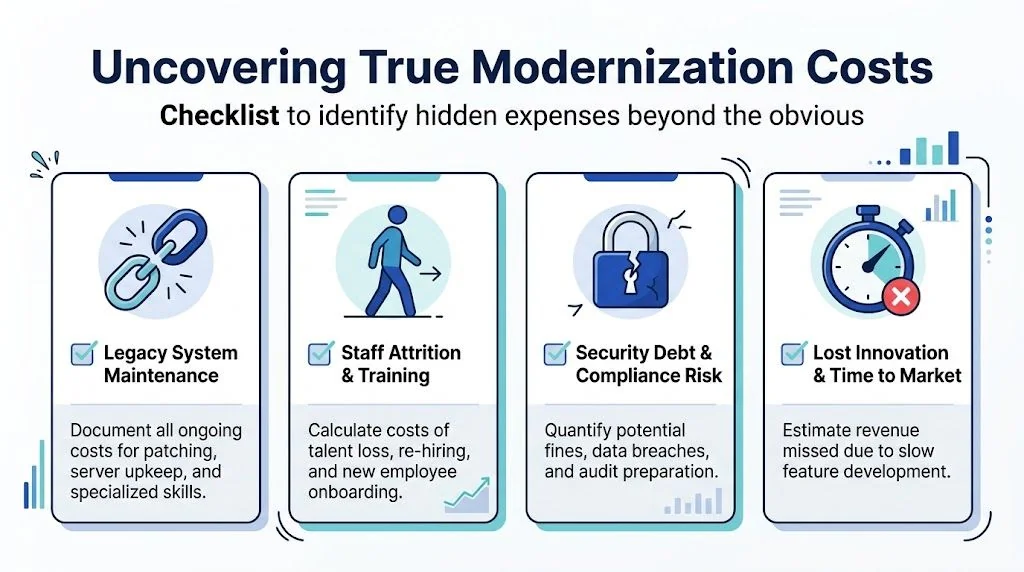

Hidden costs that change the denominator

HTEC’s analysis of software ROI and hidden costs is one of the more useful reality checks. It notes that technical debt can inflate maintenance costs by up to 50%, that modernization paths like COBOL migrations can cost $1.50-$4.00 per LOC, and that 84% of integration failures cost over $2.5M per project. Those figures matter because they expose how quickly “reasonable” budget assumptions break under legacy complexity.

A serious cost model should include more than project labor and infrastructure. It should account for the following categories.

- Legacy maintenance drag: patching, unsupported dependencies, specialist contractors, and the engineering hours spent preserving brittle workflows.

- Parallel operations: the period where old and new environments both need support, monitoring, reconciliation, and operational oversight.

- Integration rework: interface fixes, data mapping defects, batch-processing surprises, and downstream system coordination.

- People costs: retraining, backfilling, attrition risk, and the productivity dip that comes with toolchain and workflow change.

- Control overhead: testing expansion, governance checkpoints, security review, and compliance validation.

One practical way to frame this is through a total cost of ownership lens. A well-structured legacy software total cost of ownership analysis forces teams to document what they’re already paying to preserve a system that appears “cheap” only because its real costs are distributed across departments.

Cost categories that executives usually miss

Executives often underestimate transition friction because it doesn’t show up as a line item in a vendor proposal. Engineering leaders need to name it explicitly.

| Cost area | What to measure | Why it matters |
|---|---|---|
| Current-state maintenance | Support effort, patch cycles, specialist dependency | Shows the cost of staying put |
| Delivery drag | Time spent navigating brittle code and release constraints | Converts engineering friction into economic cost |
| Migration execution | Build, testing, refactoring, data movement, cutover prep | Core program budget |
| Coexistence period | Dual operations, reconciliations, duplicated monitoring | Temporary, but often material |
| Failure remediation | Rework, rollback effort, incident response, defect correction | Protects against false optimism |

A baseline without these categories produces a distorted denominator. Then the return side has to work harder to justify a project that was never properly costed.
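To make the denominator concrete, here is a minimal sketch of an investment roll-up that keeps transition costs visible and separates mandatory spend from optional optimization. The `CostLine` structure, the category names, and every amount are hypothetical placeholders, not benchmarks. Current-state maintenance and delivery drag are left out on purpose: in this sketch they are treated as the baseline that benefits are measured against, not as investment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostLine:
    category: str    # cost area being budgeted
    amount: float    # currency units over the modeling horizon
    mandatory: bool  # True for must-have modernization spend


# Hypothetical figures for illustration only.
cost_lines = [
    CostLine("Migration execution", 1_200_000, True),
    CostLine("Coexistence period", 300_000, True),
    CostLine("Failure remediation reserve", 250_000, True),
    CostLine("People costs (training, backfill)", 150_000, True),
    CostLine("Optional refactoring", 200_000, False),
]


def total_investment(lines, include_optional=True):
    """Sum cost lines, optionally restricted to mandatory spend."""
    return sum(line.amount for line in lines if include_optional or line.mandatory)


# What a naive model would call "the investment": build cost only.
naive_cost = next(l.amount for l in cost_lines if l.category == "Migration execution")
full_cost = total_investment(cost_lines)
mandatory_cost = total_investment(cost_lines, include_optional=False)

print(f"Naive (build only): {naive_cost:,.0f}")
print(f"Mandatory total:    {mandatory_cost:,.0f}")
print(f"Full total:         {full_cost:,.0f}")
```

The gap between the naive figure and the mandatory total is the part of the denominator that usually never reaches the slide deck.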

Treat every “one-time” migration cost with suspicion. Legacy transformations create temporary complexity before they remove permanent complexity.

A field-tested cost checklist

Use this checklist before you finalize the investment case:

- Document current operating burden across engineering, ops, security, and support.

- Identify migration-path-specific pricing drivers such as LOC-heavy conversion work, interface count, data complexity, and test coverage gaps.

- Model the coexistence window instead of pretending cutover happens in a single clean event.

- Reserve budget for integration failure and rework where dependencies are poorly understood.

- Separate mandatory spend from optional optimization so executives can distinguish “must-have modernization” from “nice-to-have refactoring.”

If you do this well, the project may look more expensive on paper. That’s good. A business case that survives contact with reality is worth more than an attractive estimate that collapses in quarter two.

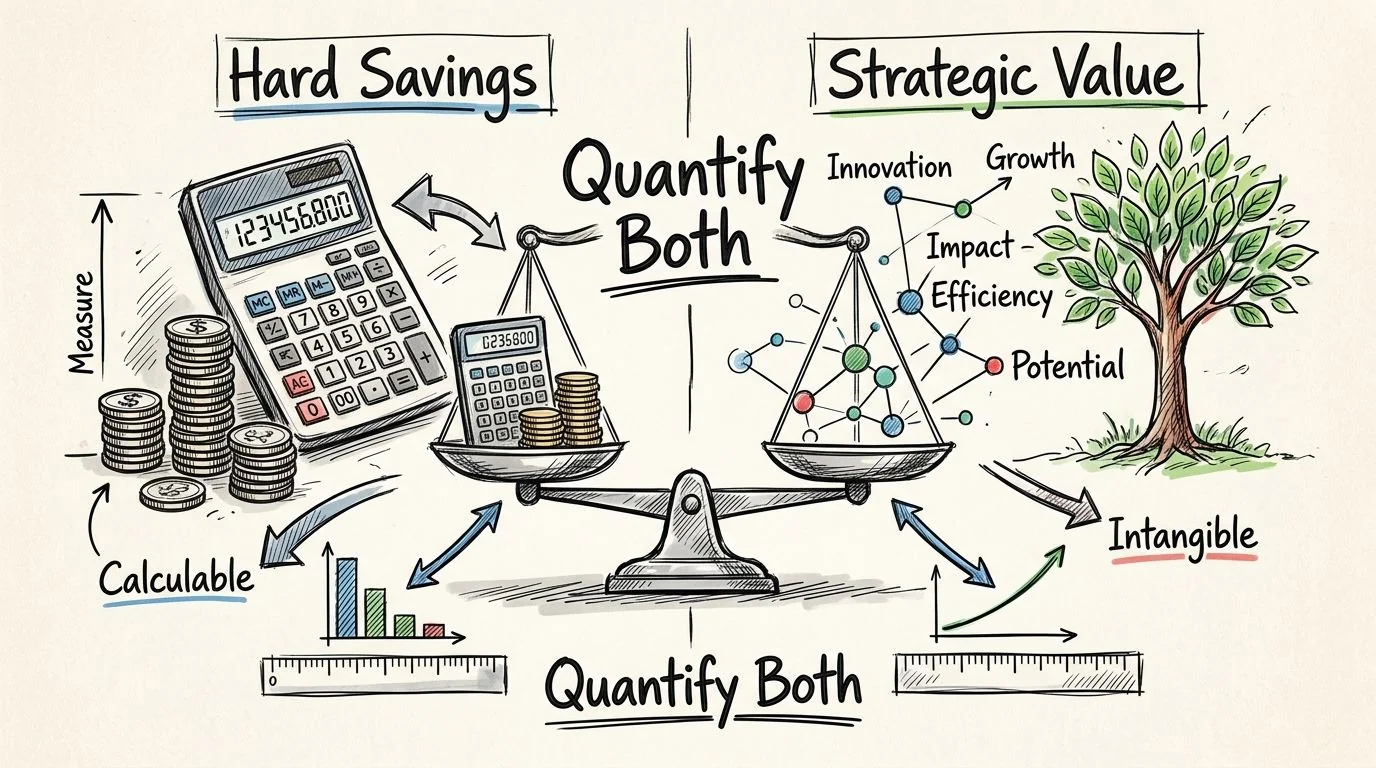

Quantify Both Hard Savings and Strategic Value

Once the denominator is honest, the numerator needs the same discipline. Most software modernization ROI cases either undersell the upside by counting only infrastructure savings, or oversell it by throwing vague strategic language around without assigning any economic logic. Both approaches fail in executive review.

The right move is to split returns into distinct value streams and model each on its own terms.

Hard savings belong in the base case

Direct savings are the easiest to defend because they map cleanly to existing spend. Infrastructure consolidation, license reduction, lower support burden, and less manual operational work should form the core return case.

American Chase’s review of data modernization strategies provides a useful benchmark set here. It states that thorough modernization strategies can achieve 200-400% ROI within 2-3 years, driven by 40% improved operational efficiency and a 60% reduction in manual processing time. I wouldn’t treat that as a plug-and-play forecast for your environment. I would treat it as proof that operational uplift belongs in the model when you can trace it to actual workflows.

A disciplined way to do that is to connect each claimed benefit to a baseline metric and an owner. If a team expects less manual processing, identify where that work happens today, who performs it, and whether the gain reduces cost, increases capacity, or improves control. Those outcomes are different and should not be mixed.

A companion resource on technical debt calculation formulas can help engineering leaders convert code and architecture drag into business-facing cost language rather than abstract “developer pain.”

Strategic value needs structure, not hand-waving

Strategic benefits are real. They’re also where weak business cases go soft. Faster release cycles, better reliability, improved security posture, and higher adaptability matter. But if you present them as slogans, finance will discount them to zero.

Use three buckets.

- Risk reduction: fewer outages, fewer severe defects, better auditability, stronger security controls.

- Capacity creation: engineers spend less time on maintenance and more on roadmap work.

- Business enablement: the platform can support product, channel, or integration moves the legacy estate constrains today.

Don’t label value as “intangible” when the business already measures the underlying pain. Downtime, delay, and operational friction all have owners and costs.

A return model that executives can follow

| Value stream | Example evidence | How to express it |
|---|---|---|
| Direct cost reduction | Lower maintenance effort, less manual work | Annual run-rate savings |
| Risk reduction | Stronger controls, more stable operations | Avoided loss exposure or protected margin |
| Delivery acceleration | Faster engineering throughput | Capacity released for roadmap priorities |
| Strategic flexibility | Easier integration and platform evolution | Option value tied to specific business initiatives |

The mistake I see most often is double counting. Teams claim lower maintenance cost, higher productivity, faster delivery, and strategic agility, then map all of them to the same engineering hours. Don’t do that. Pick one monetization path for each benefit.
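One way to catch double counting before finance does is to keep a benefits register and flag any underlying source of value that more than one benefit claims. The sketch below assumes a simple tuple layout and hypothetical entries; the field names are illustrative, not a standard.

```python
from collections import defaultdict

# Each claim: (benefit, underlying source of value, monetization path).
# Entries are hypothetical examples.
claims = [
    ("Lower maintenance cost", "maintenance engineering hours", "annual run-rate savings"),
    ("Higher productivity", "maintenance engineering hours", "capacity released"),
    ("Fewer outages", "incident losses", "avoided loss exposure"),
]


def find_double_counts(claims):
    """Return sources of value that are claimed by more than one benefit."""
    by_source = defaultdict(list)
    for benefit, source, _path in claims:
        by_source[source].append(benefit)
    return {source: benefits for source, benefits in by_source.items() if len(benefits) > 1}


for source, benefits in find_double_counts(claims).items():
    print(f"Double counted: '{source}' is monetized by {benefits}")
```

Any source that shows up here needs a decision: keep one monetization path and drop the others from the model.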

What belongs in the upside case

Base case returns should be concrete and near-term. Upside case returns can include broader strategic effects, but only if they’re tied to an explicit dependency such as a product launch, partner integration, or risk-control requirement.

That distinction matters. It keeps your software modernization ROI model grounded while still acknowledging that modernization is not just a cost-reduction exercise. In strong cases, the core value is often that the company can execute decisions the legacy platform keeps delaying.

Apply Financial Models Beyond Simple Payback

Simple payback is attractive because it’s easy to explain. It also hides too much. Modernization programs usually incur costs early and realize benefits unevenly over time. A model that asks only “how fast do we recover spend?” misses the timing, durability, and concentration of returns.

A better approach is to build a multi-year cash flow and view the program through three lenses: payback period, net present value, and internal rate of return.

What each metric actually tells you

Payback period answers a narrow question. When does cumulative benefit exceed cumulative cost? It’s useful for liquidity discipline, especially if the business is sequencing several major investments.

Net present value is the stronger decision tool. It discounts future cash flows so a benefit realized later is worth less than the same benefit realized sooner. That matters in modernization because delays, ramp periods, and phased releases are normal.

Internal rate of return helps compare this investment with other uses of capital. For technical leaders, its value is less about finance jargon and more about forcing consistency. If the implied return depends on heroic timing assumptions, the model will expose it.
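The three metrics can be sketched in a few lines of plain Python. This is an illustrative implementation under stated assumptions: cash flows are annual, the first entry occurs at time zero, the 10% discount rate and the cash flow values are hypothetical, and the IRR search uses simple bisection that assumes NPV changes sign exactly once across the search range.

```python
def npv(rate, cash_flows):
    """Net present value; cash_flows[0] occurs at t=0 and is not discounted."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


def payback_period(cash_flows):
    """First period index where cumulative cash flow is non-negative, else None."""
    cumulative = 0.0
    for t, cf in enumerate(cash_flows):
        cumulative += cf
        if cumulative >= 0:
            return t
    return None


def irr(cash_flows, lo=-0.99, hi=10.0, tol=1e-7):
    """Rate where NPV is zero, found by bisection; None if no sign change."""
    f_lo, f_hi = npv(lo, cash_flows), npv(hi, cash_flows)
    if f_lo * f_hi > 0:
        return None
    while hi - lo > tol:
        mid = (lo + hi) / 2
        f_mid = npv(mid, cash_flows)
        if f_lo * f_mid <= 0:
            hi = mid              # root lies in the lower half
        else:
            lo, f_lo = mid, f_mid  # root lies in the upper half
    return (lo + hi) / 2


# Hypothetical net cash flows in thousands: costs early, benefits ramping later.
flows = [-1000, -200, 400, 600, 700, 700]

print("Payback period (years):", payback_period(flows))
print("NPV at 10%:", round(npv(0.10, flows), 2))
print("IRR:", round(irr(flows), 4))
```

Note how payback says nothing about the final two years of benefit, while NPV and IRR both depend on them. That is the blind spot the table below describes.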

Build the cash flow the way the program will actually unfold

Use period-by-period cash flows rather than a single blended ROI percentage. That means modeling:

- Initial outflows: discovery, architecture work, partner costs, platform setup, testing expansion

- Transition effects: overlap costs, training, productivity dip, temporary duplication

- Stabilized benefits: lower run costs, reduced toil, fewer incidents, faster delivery once the target state settles

- Residual obligations: decommissioning work, deferred integrations, final cleanup

A modernization program rarely produces clean linear gains. Cash flow improves in steps, not smooth curves.
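The four components above can be laid out as separate period-by-period series and then netted, which keeps each assumption reviewable on its own. The sketch below uses hypothetical annual figures in thousands; the series names mirror the list above and nothing else about the layout is prescribed by the source.

```python
from itertools import accumulate

# Hypothetical annual figures in thousands, years 0 through 4.
initial_outflows     = [-900, -300,    0,   0,   0]
transition_effects   = [-100, -250, -100,   0,   0]
stabilized_benefits  = [   0,  100,  450, 700, 700]
residual_obligations = [   0,    0,  -50, -80,   0]

# Net cash flow per period is the sum of the four components.
net = [
    sum(parts)
    for parts in zip(
        initial_outflows, transition_effects, stabilized_benefits, residual_obligations
    )
]
cumulative = list(accumulate(net))

for year, (n, c) in enumerate(zip(net, cumulative)):
    print(f"Year {year}: net {n:>6}, cumulative {c:>6}")
```

With these placeholder numbers the cumulative position keeps worsening in year 1 before it recovers, which is exactly the pattern a single blended ROI percentage hides.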

A practical finance view for engineering leaders

| Metric | Good for | Weakness |
|---|---|---|
| Payback period | Quick screen for capital recovery | Ignores value after payback and timing of later gains |
| Net present value | Core investment decision | Sensitive to poor cash flow assumptions |
| Internal rate of return | Comparing alternative investments | Can look precise even when assumptions are shaky |

The operational lesson is simple. If your business case only shows payback, leadership will ask the missing questions anyway. They’ll want to know what benefits arrive when, what happens if adoption lags, and whether the long-tail returns justify the disruption.

What CFOs usually challenge

Finance leaders tend to focus on four issues:

- Timing realism: when benefits start, not when the project team hopes they start.

- Attribution: whether savings leave the cost base or just free up effort.

- Durability: whether the gains persist after the first year.

- Alternatives: whether a smaller or phased intervention produces a better return profile.

If your model can answer those four questions, you’ve moved beyond a technical justification and into an investable business case.

Risk-Adjust Your ROI with Failure Rate Data

This is where most software modernization ROI models break.

Teams build a careful cost baseline. They estimate benefits with reasonable discipline. They produce payback, NPV, and IRR. Then they stop as if the only remaining variable is execution quality. That ignores the central fact of modernization economics: projected value is not the same as expected value.

The missing step is to discount returns for the probability that the transformation underdelivers or fails outright.

Phoenix Strategy Group’s analysis of IT modernization ROI points to the uncomfortable number many business cases avoid: up to 67% of complex migrations fail due to technical pitfalls. Pricing that single number into the model can invert the investment logic. A project that looks excellent in a naive model can become marginal or negative once failure probability enters the equation.

Expected value beats optimistic value

A clean way to model this is to build three scenarios:

- Success case: the modernization lands as designed and benefits arrive broadly as planned.

- Partial-value case: the system goes live, but scope reduction, delays, or adoption gaps shrink the return.

- Failure case: the program is abandoned, rolled back, or lands in a technically unstable form that destroys most of the expected economics.

Then apply probabilities to each scenario and calculate expected value across the set. That gives executives something far more useful than a single ROI number. It gives them a range and a decision surface.
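The expected-value calculation itself is small. The sketch below assumes each scenario has already been reduced to a single NPV; the probabilities and NPVs are hypothetical placeholders in thousands and should be replaced with weights grounded in your own migration path.

```python
# Hypothetical scenario set: probabilities must sum to 1, NPVs in thousands.
scenarios = {
    "success":       {"probability": 0.45, "npv": 1_500},
    "partial_value": {"probability": 0.30, "npv": 200},
    "failure":       {"probability": 0.25, "npv": -1_200},
}


def expected_value(scenarios):
    """Probability-weighted NPV across all scenarios."""
    total_p = sum(s["probability"] for s in scenarios.values())
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    return sum(s["probability"] * s["npv"] for s in scenarios.values())


headline = scenarios["success"]["npv"]
risk_adjusted = expected_value(scenarios)
low = min(s["npv"] for s in scenarios.values())
high = max(s["npv"] for s in scenarios.values())

print(f"Headline NPV (success only): {headline}")
print(f"Expected NPV:                {risk_adjusted:.0f}")
print(f"Outcome range:               {low} to {high}")
```

Presenting the headline figure, the expected value, and the range together is what turns a single ROI number into the decision surface described above.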

If you need help structuring the forecast, this guide to building a powerful DCF model in Excel is a practical reference for translating scenario-based cash flows into a finance-ready model.

Naive ROI vs risk-adjusted ROI example

| Metric | Naive Calculation | Risk-Adjusted Calculation | Comment |
|---|---|---|---|
| Investment | Full project cost only | Full project cost plus disruption and remediation scenarios | Naive models usually understate downside exposure |
| Benefits | Assumes full planned savings and strategic gains | Weights success, partial-value, and failure outcomes | Expected value is lower than headline value |
| Timing | Benefits start on planned date | Benefits stagger based on delivery uncertainty | Delays reduce present value |
| Decision output | Single positive ROI figure | Range of outcomes with threshold conditions | Better fit for executive approval |

The insight often overlooked is that risk adjustment doesn’t just reduce the upside. It changes which modernization path is rational. A full rewrite and a phased replatform can show similar headline returns, but once you weight them for execution risk, the smaller-step option often has the better expected value.
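A hypothetical comparison shows how the ranking can flip. In the sketch below the two options have similar success-case NPVs, but the full rewrite is assigned a higher failure probability and a deeper failure loss. Every number is an assumption chosen for illustration, not data from any source cited here.

```python
def expected_value(outcomes):
    """outcomes: list of (probability, npv) pairs whose probabilities sum to 1."""
    if abs(sum(p for p, _ in outcomes) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(p * value for p, value in outcomes)


# (probability, NPV in thousands) for success, partial value, failure.
# All figures are hypothetical.
options = {
    "full_rewrite":      [(0.35, 2_000), (0.30, 300), (0.35, -1_500)],
    "phased_replatform": [(0.55, 1_700), (0.30, 500), (0.15, -500)],
}

# Rank by headline (success-case) NPV versus expected NPV.
by_headline = sorted(options, key=lambda name: options[name][0][1], reverse=True)
by_expected = sorted(options, key=lambda name: expected_value(options[name]), reverse=True)

for name in options:
    print(f"{name}: headline {options[name][0][1]}, expected {expected_value(options[name]):.0f}")
print("Best on headline NPV:", by_headline[0])
print("Best on expected NPV:", by_expected[0])
```

Under these assumptions the rewrite wins on the headline number and loses clearly on expected value, which is the comparison executives should be shown.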

When not to modernize

A serious model has to allow for “no” as a valid conclusion.

Don’t modernize yet when the current platform is operationally stable, technical debt is containable, the business case depends mostly on speculative strategic upside, and the migration path carries outsized technical ambiguity. In those cases, you’re not deferring value. You’re avoiding value destruction.

This is also where decision support tools matter. Platforms such as Modernization Intel aggregate failure analysis, cost transparency, and partner specialization data across migration paths, which helps teams challenge assumptions before they lock into a program structure. That’s useful not because it guarantees success, but because it makes risk visible earlier.

If the expected value goes negative after you price failure risk, the right answer isn’t “improve the slide.” It’s “change the scope, phase, or decision.”

A better decision rule

Use this sequence:

- Build the conventional financial model.

- Define explicit failure and partial-success scenarios.

- Apply probability weights grounded in path complexity.

- Compare modernization options on expected value, not optimistic ROI.

- Set a stop condition before approval.

That last step is where governance gets serious. If the program crosses a cost threshold, misses a readiness gate, or fails a pilot objective, leadership should already know whether the plan shifts, pauses, or ends. Without that rule, risk-adjusted ROI becomes an academic exercise rather than an operating discipline.
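A stop condition is easier to enforce when it is written down as an explicit rule before approval. The sketch below is one hypothetical encoding: the 20% overrun threshold, the order of precedence, and the mapping of each trigger to "end", "shift", or "pause" are all assumptions that your governance body would need to set for itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProgramStatus:
    approved_budget: float
    forecast_cost: float
    readiness_gate_passed: bool
    pilot_objective_met: bool


def gate_decision(status, max_overrun=0.20):
    """Return 'end', 'shift', 'pause', or 'continue' under hypothetical rules."""
    overrun = (status.forecast_cost - status.approved_budget) / status.approved_budget
    if overrun > max_overrun:
        return "end"      # cost threshold crossed
    if not status.pilot_objective_met:
        return "shift"    # rescope or change path after a failed pilot
    if not status.readiness_gate_passed:
        return "pause"    # hold until the readiness gate is met
    return "continue"


status = ProgramStatus(
    approved_budget=2_000_000,
    forecast_cost=2_250_000,
    readiness_gate_passed=False,
    pilot_objective_met=True,
)
print(gate_decision(status))
```

The specific thresholds matter less than the fact that they exist in writing before the first invoice arrives.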

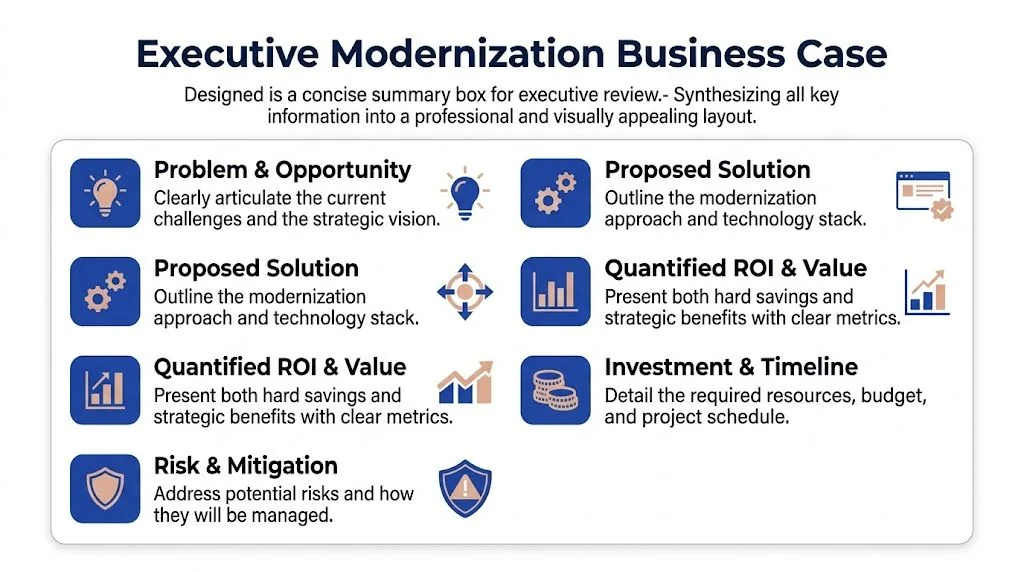

Build the Executive-Ready Modernization Business Case

An executive team doesn’t need a fifty-page architecture narrative. It needs a decision document. The strongest modernization cases I’ve seen fit on one page at the front, with detailed appendices behind it. That front page carries the recommendation, the financial logic, the key assumptions, and the conditions under which the organization should proceed or stop.

The need for that rigor is obvious in Particle41’s guidance on measuring application modernization ROI. It notes that only 35% of digital transformations fully succeed, a shortfall it attributes to poor KPI alignment, and that successful projects tie outcomes to business targets such as 30-50% maintenance cost reduction or a 14% revenue boost, validated against baselines. That’s the standard. If your KPIs don’t connect to business outcomes, your executive case is incomplete before the meeting starts.

What the one-page summary should contain

A credible executive summary has five parts.

| Component | What leadership needs to see |
|---|---|
| Problem statement | Why the current platform creates material cost, risk, or delivery constraints |
| Proposed path | The modernization approach and why this path beats alternatives |
| Financial case | Risk-adjusted economics, not just a headline ROI |
| Key assumptions | What must be true for the value case to hold |
| Decision gates | When to continue, phase, pause, or stop |

What shouldn’t dominate the page is architecture detail. Executives care about architecture only insofar as it affects cost, risk, speed, and strategic flexibility.

The narrative that earns trust

The most credible business cases do something many teams avoid. They include the reasons not to proceed.

That doesn’t weaken the argument. It strengthens it. When you show the conditions under which modernization destroys value, leadership sees that the recommendation is analytical, not predetermined.

Use language like this:

- Proceed now when baseline costs are structurally high, the current platform blocks important delivery outcomes, and phased execution contains technical risk.

- Proceed in stages when the end-state is sound but dependency complexity makes full-scope execution too fragile.

- Hold and contain when the current system remains viable and the modeled upside depends mostly on uncertain strategic assumptions.

- Retire instead of modernize when the business capability itself is shrinking or can be replaced more cleanly than it can be rebuilt.

A resource such as Legacy Application Modernization Services can be useful as an implementation reference when leadership asks what execution support may look like, but it belongs after the decision logic, not in place of it.

Final executive checklist

Before you send the memo upstairs, verify these points:

- Every claimed benefit has a baseline metric and a named owner.

- The model shows timing by period, not a single blended ROI number.

- Failure and partial-success scenarios are explicit.

- Decision thresholds are written, not implied.

- The document includes a valid “do not modernize yet” outcome.

Executives don’t fund modernization because the architecture is cleaner. They fund it because the decision case is stronger than the alternatives.

That’s the standard for software modernization ROI. Not enthusiasm. Not industry averages in isolation. A defensible model that prices actual costs, values actual returns, and admits that failure risk is part of the economics, not an afterthought.

If you’re preparing a modernization business case now, start by auditing your current model for three omissions: hidden transition costs, unstructured strategic benefits, and zero probability weighting. Fix those first. Most ROI cases don’t fail because the math is hard. They fail because the assumptions are too flattering to survive review.