The Realist's Guide to Mainframe DevOps Integration

Mainframe DevOps integration is not a technical project; it’s a business survival strategy. For decades, the mainframe was the untouchable core—stable, reliable, and slow. That is now a competitive liability. The problem is connecting your most critical systems to the high-speed, agile world your customers live in. This guide outlines a direct, evidence-based path to bridging the chasm between your fast-moving distributed teams and the core systems that still run most of the global economy, unlocking faster feature releases without sacrificing the mainframe’s famous stability.

Why Stalling on Mainframe Integration Is No Longer an Option

Delaying mainframe DevOps integration is a direct path to falling behind competitors and failing to meet regulatory demands. The decision is not if you should integrate, but how fast you can do it without breaking the business-critical services running on your IBM Z (formerly System z) and z/OS infrastructure.

The core problem is a two-speed IT organization. While cloud-native teams push code daily using CI/CD pipelines, mainframe teams are often stuck in quarterly release cycles, bogged down by manual handoffs. This friction doesn’t just slow you down; it cripples your ability to deliver a unified customer experience.

The Growing Chasm Between Speed and Stability

Mainframe workload is growing, not shrinking. The challenge is making it agile enough to keep pace. Without a clear integration strategy, your organization is exposed to significant and escalating business risks:

- Slow Time-to-Market: When a new mobile app feature needs a change to a backend COBOL program, the entire project moves at the speed of the mainframe release cycle. This delay means missing market windows and ceding ground to more nimble competitors. A typical 90-day mainframe release cycle makes real-time market response impossible.

- Increased Operational Risk: Manual deployment processes are a breeding ground for human error. A single mistake in a JCL promotion can trigger costly downtime, directly hitting revenue and eroding customer trust. One fat-finger error can take down a system that processes millions of transactions a day.

- Regulatory Compliance Failures: Mandates like the EU’s Digital Operational Resilience Act (DORA) demand extreme operational resilience and bulletproof auditability. The penalties have teeth. National regulators can sanction financial entities directly, and critical ICT third-party providers face periodic penalty payments of up to 1% of their average daily worldwide turnover. Manual processes cannot provide the automated audit trails and resilience needed to satisfy these requirements.

Every CTO must preserve the mainframe’s 99.999% availability while adopting the development velocity of a cloud-native startup. Mainframe DevOps integration is the only proven path to resolving this conflict.

The market data confirms the urgency. Industry analysis projects the overall mainframe market will grow from USD 5.65 billion to USD 7.54 billion by 2031. According to Mordor Intelligence, revenue from services—which includes modernization and DevOps tooling—is growing at 9.08% annually, far outpacing the hardware market itself. This is not speculative investment; market leaders are aggressively pouring capital into making their mainframes faster and fully integrated. By ignoring this trend, you are actively choosing to become less competitive.

To learn more about how this fits into a broader strategy, check out our guide on mainframe modernization. The only question left is whether you will lead this change or be left behind by it.

Actionable Framework for a Hybrid Mainframe CI/CD Pipeline

Moving from theory to practice requires specific, battle-tested technical patterns. The goal is not to force a cloud-native pipeline onto z/OS—that always fails. The only winning move is a hybrid architecture that respects the mainframe’s strengths while injecting modern speed and visibility. The first, and most painful, step is migrating your COBOL, PL/I, and JCL assets from legacy Source Code Managers (SCMs) like Endevor or ChangeMan ZMF into a modern SCM like Git. This establishes a single source of truth, a non-negotiable prerequisite for any serious DevOps work.

Decision Matrix: Initiating Mainframe Source Code Migration

Moving to Git is the critical first step. Use this matrix to assess readiness and identify blockers. A “No” in any of the first three criteria signals a high risk of project failure.

| Criterion | Question | Yes | No (Action Required) |
|---|---|---|---|
| Tooling Readiness | Is a modern Git-based SCM (e.g., GitHub, GitLab) licensed and available? | Proceed with technical planning. | Procure and provision a modern SCM immediately. |
| Team Skills | Have mainframe developers received foundational Git training (branching, PRs)? | Begin pilot project migration. | Schedule mandatory Git workshops. Project is blocked until complete. |
| Executive Mandate | Is there a clear directive from leadership to decommission the legacy SCM? | Lock legacy SCM in read-only mode post-migration. | Secure executive sponsor to mandate the cutover. Without this, adoption will fail. |
| History Migration | Is preserving decades of change history a hard requirement? | Plan for a complex, tool-assisted history migration. | Agree on a cut-off date. Migrate only current source and archive the rest. |
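The sketch below shows one way to apply the matrix consistently across many applications. The hypothetical `assess` function treats a "No" on any of the first three criteria as a blocker. It turns the fourth criterion into a scoping action. The class, field, and function names are illustrative assumptions and do not come from any tool.

```python
from dataclasses import dataclass

@dataclass
class MigrationReadiness:
    # "Yes"/"No" answers to the four questions in the decision matrix.
    tooling_ready: bool       # Git-based SCM licensed and available
    team_trained: bool        # foundational Git training completed
    executive_mandate: bool   # directive to decommission the legacy SCM
    history_required: bool    # full change history must be preserved

# A "No" on any of these three criteria blocks the project.
BLOCKERS = {
    "tooling_ready": "Procure and provision a modern SCM immediately.",
    "team_trained": "Schedule mandatory Git workshops; the project is blocked until complete.",
    "executive_mandate": "Secure an executive sponsor to mandate the cutover.",
}

def assess(readiness: MigrationReadiness) -> tuple[bool, list[str]]:
    """Return (ready_to_proceed, actions) for one application's migration."""
    actions = [action for attr, action in BLOCKERS.items() if not getattr(readiness, attr)]
    ready = not actions  # only blocker actions have been collected at this point
    # History migration is a scoping decision, not a blocker.
    if readiness.history_required:
        actions.append("Plan a complex, tool-assisted history migration.")
    else:
        actions.append("Agree on a cut-off date; migrate current source and archive the rest.")
    return ready, actions
```

For example, a team with tooling and a mandate but no Git training gets `ready == False`. Its first action is the workshop requirement.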

Building the Hybrid Pipeline Architecture

Once all code resides in Git, you can build the pipeline. The architecture is inherently hybrid, with an orchestrator like Jenkins, GitLab CI, or GitHub Actions acting as the central nervous system. Critically, this orchestrator does not run builds on the mainframe. It delegates.

- Commit and Trigger: A developer pushes a change to a COBOL program in their Git repository.

- Orchestration: A webhook triggers a job in your central orchestrator (e.g., Jenkins).

- Delegation: The Jenkins pipeline executes a step that calls a z/OS agent. The agent clones the updated source from Git into z/OS UNIX System Services and hands it to a build framework such as IBM’s Dependency Based Build (DBB).

- Native Build & Test: On the z/OS LPAR, DBB invokes the COBOL compiler, runs the link-edit, and executes automated unit tests with a framework like zUnit. This happens in the native environment, ensuring a valid build.

- Feedback Loop: Results—pass or fail—are sent back to Jenkins, which either halts the pipeline or proceeds.

This process transforms the opaque mainframe build into a transparent, automated step in a modern pipeline. You get speed and visibility without compromising the integrity of the z/OS environment.
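The control flow above fits in a few lines of Python, assuming each stage is a callable that returns pass or fail. The z/OS build and zUnit stages here are hypothetical stubs. In a real pipeline they would call out to your z/OS agent and DBB, and this sketch does not model those interfaces. What it does show is the orchestrator's role. It sequences the stages and halts on the first failure, but it never compiles anything itself.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class StageResult:
    name: str
    passed: bool
    log: str = ""

Stage = Callable[[str], StageResult]

def run_pipeline(commit_sha: str, stages: list[Stage]) -> list[StageResult]:
    """Run stages in order for one commit; stop at the first failure (the feedback loop)."""
    results: list[StageResult] = []
    for stage in stages:
        result = stage(commit_sha)
        results.append(result)
        if not result.passed:
            break  # halt: later stages (e.g. deploy) never run on a broken build
    return results

# HYPOTHETICAL stubs standing in for work delegated to the z/OS agent.
def zos_build(commit_sha: str) -> StageResult:
    # Real version: clone commit_sha on z/OS UNIX, invoke a DBB build, collect the result.
    return StageResult("zos-build", True, f"compiled and link-edited {commit_sha}")

def zunit_tests(commit_sha: str) -> StageResult:
    # Real version: run zUnit test cases on the LPAR; here one failure is simulated.
    return StageResult("zunit", False, "1 of 12 test cases failed")

def deploy(commit_sha: str) -> StageResult:
    return StageResult("deploy", True)
```

With these stubs, `run_pipeline("abc123", [zos_build, zunit_tests, deploy])` returns results for the build and test stages only. The failed zUnit stage stops the pipeline before deployment.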

Enabling Parallel Development with APIs

A common bottleneck is a distributed application team waiting on a quarterly mainframe release for a small logic change. This sequential dependency kills velocity. The fix is to proactively expose core mainframe business functions as REST APIs.

A robust API layer decouples your distributed and mainframe development streams. It allows front-end and microservices teams to develop against stable, mocked API endpoints while the mainframe team works in parallel to implement the underlying COBOL or PL/I logic.

This converts sequential blockers into parallel workstreams. Getting this right depends on strong system integrations that bridge these different worlds.
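Here is a minimal sketch of that decoupling, assuming the teams have agreed on a hypothetical `get_balance` contract. The front-end code depends only on a `Protocol`. It can therefore run against canned fixtures today and against the real mainframe-backed API later, with no changes. All names are illustrative.

```python
from decimal import Decimal
from typing import Protocol

class AccountBalanceApi(Protocol):
    """HYPOTHETICAL contract agreed between the front-end and mainframe teams."""
    def get_balance(self, account_id: str) -> dict: ...

class MockAccountBalanceApi:
    """Canned responses the front-end team develops against while COBOL work is in flight."""
    def __init__(self, fixtures: dict[str, str]):
        self._fixtures = fixtures  # account_id -> balance as a decimal string

    def get_balance(self, account_id: str) -> dict:
        if account_id not in self._fixtures:
            return {"status": 404, "error": "account not found"}
        return {"status": 200, "accountId": account_id, "balance": self._fixtures[account_id]}

def render_balance(api: AccountBalanceApi, account_id: str) -> str:
    """Front-end logic: depends only on the contract, never on what sits behind it."""
    response = api.get_balance(account_id)
    if response["status"] != 200:
        return "Balance unavailable"
    # Balances travel as strings and are parsed with Decimal to avoid float rounding.
    return f"Balance: {Decimal(response['balance']):.2f}"
```

Once the mainframe team ships the real endpoint, a client class with the same `get_balance` method replaces the mock. `render_balance` stays untouched.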

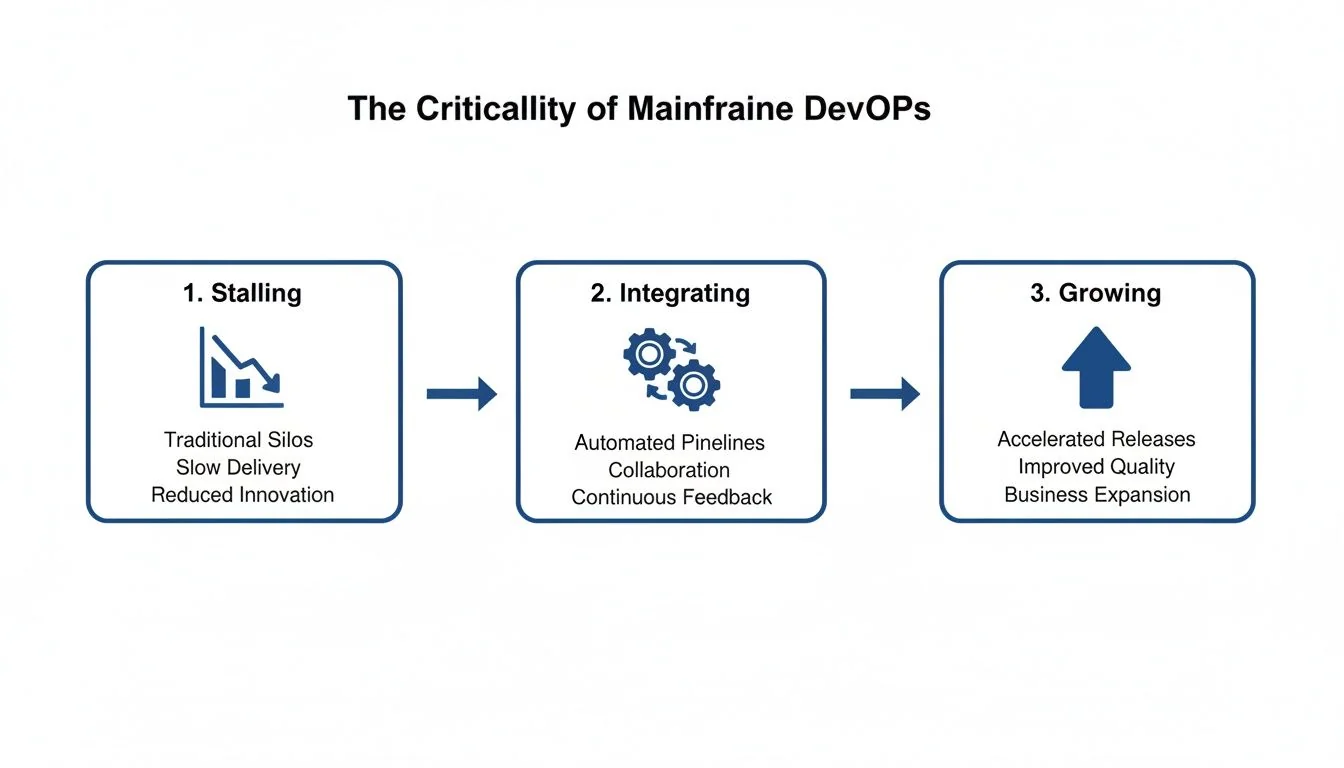

This diagram shows the journey from a siloed, stagnant state to an integrated, growth-focused one.

Without integration, the mainframe delivery process will inevitably stall. By connecting it to modern practices, you align it with the rest of the business and enable real growth.

The pipeline must also manage the automated promotion of artifacts (compiled load modules and DBRMs) across environments. This eliminates the manual errors that plague traditional mainframe release nights.
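The sketch below illustrates automated promotion, assuming a hypothetical DEV → TEST → PROD path. It enforces two rules that manual release nights tend to break. First, an artifact moves only one environment at a time. Second, the binary promoted to the next stage must be byte-for-byte the one that was built and tested, which the code checks with a SHA-256 digest. The registry class is illustrative and is not a real product API.

```python
import hashlib

PROMOTION_PATH = ["DEV", "TEST", "PROD"]  # HYPOTHETICAL environment sequence

class PromotionError(Exception):
    pass

class ArtifactRegistry:
    """Tracks each built artifact (e.g. a load module) and where it currently lives."""

    def __init__(self) -> None:
        self._digests: dict[str, str] = {}
        self._locations: dict[str, str] = {}

    def register(self, name: str, content: bytes) -> str:
        """Record a freshly built artifact in the first environment."""
        digest = hashlib.sha256(content).hexdigest()
        self._digests[name] = digest
        self._locations[name] = PROMOTION_PATH[0]
        return digest

    def promote(self, name: str, content: bytes, target: str) -> None:
        """Move an artifact one step along the path, refusing skips and rebuilt binaries."""
        current = self._locations[name]
        if PROMOTION_PATH.index(target) != PROMOTION_PATH.index(current) + 1:
            raise PromotionError(f"{name}: cannot promote from {current} to {target}")
        if hashlib.sha256(content).hexdigest() != self._digests[name]:
            raise PromotionError(f"{name}: binary differs from the one built and tested")
        self._locations[name] = target

    def location(self, name: str) -> str:
        return self._locations[name]
```

The design choice is to build once and promote the same bytes. Recompiling per environment is exactly what this check refuses.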

The Technical Landmines That Cause 67% of Projects to Implode

Vendor proposals paint a rosy picture of mainframe DevOps integration. The reality is a minefield of technical traps. Knowing where these mines are buried is the difference between successful modernization and a career-limiting write-off. An industry analysis from Mordor Intelligence highlights the high stakes, but the real story is in the code. A staggering 67% of legacy integration projects fail, often because of something as obscure as decimal precision loss when shifting from COBOL to Java.

The COMP-3 Data Integrity Nightmare

The single most destructive pitfall is mishandling COMP-3 (Packed Decimal) data. Mainframe financial applications use this format to store numbers with absolute, non-negotiable precision. It packs two decimal digits into each byte, with the final half-byte holding the sign. No native primitive type in Java or Python matches this layout. The naive approach is to map a COMP-3 field to a standard floating-point number (float or double). This is a fatal mistake. Binary floating point cannot represent most decimal fractions exactly (0.10, for example), so every conversion introduces microscopic rounding errors. Multiplied across millions of transactions, those errors become catastrophic accounting imbalances.

Any partner who cannot articulate their battle-tested strategy for handling COMP-3 without precision loss is an immediate red flag. Standard floating-point types are a guaranteed failure. The only acceptable answer involves specialized libraries or custom logic that respects the binary-coded decimal representation and decodes it into an exact decimal type such as Java’s BigDecimal or Python’s decimal.Decimal.
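To make the "custom logic" option concrete, here is a minimal Python sketch that decodes a packed-decimal field straight into `decimal.Decimal` without ever touching floating point. It assumes the caller knows the field's implied scale from the copybook's PIC clause. It accepts the standard sign nibbles (C, A, E, F positive; D, B negative). A production decoder would also need the record offsets and field lengths from the copybook.

```python
from decimal import Decimal

NEGATIVE_SIGNS = {0x0B, 0x0D}
POSITIVE_SIGNS = {0x0A, 0x0C, 0x0E, 0x0F}  # 0xF marks an unsigned field

def unpack_comp3(data: bytes, scale: int) -> Decimal:
    """Decode a COBOL COMP-3 (packed decimal) field into an exact Decimal.

    Each byte holds two 4-bit decimal digits; the low nibble of the last byte
    is the sign. `scale` is the number of implied decimal places from the
    PIC clause (e.g. PIC S9(5)V99 COMP-3 -> scale=2).
    """
    if not data:
        raise ValueError("empty COMP-3 field")
    nibbles = []
    for byte in data:
        nibbles.append(byte >> 4)    # high-order nibble
        nibbles.append(byte & 0x0F)  # low-order nibble (the sign, for the last byte)
    sign_nibble = nibbles.pop()
    if any(d > 9 for d in nibbles):
        raise ValueError(f"invalid digit nibble in {data.hex()}")
    if sign_nibble in NEGATIVE_SIGNS:
        sign = 1
    elif sign_nibble in POSITIVE_SIGNS:
        sign = 0
    else:
        raise ValueError(f"invalid sign nibble {sign_nibble:#x}")
    # Build the Decimal from (sign, digits, exponent): no float is ever involved.
    return Decimal((sign, tuple(nibbles), -scale))
```

For example, the 4-byte value `12 34 56 7D` with a `PIC S9(5)V99` layout decodes to exactly `-12345.67`. Summing ten decoded `0.10` values yields exactly `1.00`. By contrast, `sum([0.1] * 10)` in binary floating point does not equal `1.0`.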

The Hidden Bombs in Character Conversion

Another classic project-killer is the conversion between EBCDIC and ASCII character sets. A standard conversion table works for the alphabet and numbers, but it’s a trap. EBCDIC is not one character set but a family of national code pages; 037 and 500, for example, place some punctuation at different byte values. On top of that, many mainframe shops added proprietary special characters decades ago. A generic EBCDIC-to-ASCII conversion turns these essential custom characters into garbage data. This silently corrupts customer names, addresses, and critical report fields. A successful project requires a meticulous discovery process to map every single character in your organization’s specific, non-standard code page.
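Here is a minimal sketch of the discovery-then-override approach. It assumes your data is based on Python's built-in `cp037` (US/Canada EBCDIC) codec. The single override entry is purely hypothetical; your real table comes out of your own code-page analysis. `audit_bytes` supports the discovery step. It lists byte values in a sample whose generic decoding falls outside the characters you expect, so a person can decide what each one really means.

```python
from collections.abc import Mapping

BASE_CODEPAGE = "cp037"  # assumption: US/Canada EBCDIC is the starting point

# HYPOTHETICAL site-specific overrides found during code-page discovery:
# byte value -> the character that byte actually represents in this shop's data.
SITE_OVERRIDES: Mapping[int, str] = {
    0x4A: "€",  # illustration only: a position cp037 decodes as "¢"
}

def build_decode_table(overrides: Mapping[int, str]) -> list[str]:
    """One entry per byte value: the base code page first, local overrides on top."""
    table = [bytes([b]).decode(BASE_CODEPAGE, errors="replace") for b in range(256)]
    for byte_value, char in overrides.items():
        table[byte_value] = char
    return table

def ebcdic_to_text(data: bytes, table: list[str]) -> str:
    """Decode character data byte by byte through the table."""
    return "".join(table[b] for b in data)

def audit_bytes(sample: bytes, expected: set[str]) -> dict[int, str]:
    """Byte values whose generic decoding is unexpected: candidates for manual review."""
    generic = build_decode_table({})
    return {b: generic[b] for b in sorted(set(sample)) if generic[b] not in expected}
```

The table applies to character fields only. Binary and packed-decimal (COMP-3) fields in the same record must be sliced out and decoded separately, or the conversion will corrupt them.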

Replicating Batch Dependencies and Recovery Logic

Mainframe batch processing isn’t just a list of tasks. It’s an interconnected web of dependencies, defined in Job Control Language (JCL) and enforced by an enterprise workload scheduler. A job might only run after five others succeed, and these jobs have sophisticated restart logic allowing them to resume from a point of failure. Replicating this in a modern orchestrator like Jenkins or GitLab is where teams consistently fail:

- Missing Dependencies: Failing to map the complete dependency graph, which causes jobs to run out of order and silently corrupt data.

- Ignoring Restart Logic: Building a pipeline that can only restart a failed multi-hour batch job from the beginning. This turns a five-minute hiccup into a day-long outage.

- VSAM Complexity: Trying to treat VSAM files like simple relational tables. Mishandling key-sequenced (KSDS) and relative record (RRDS) data sets leads to crippling performance bottlenecks.

These are the primary technical hurdles that cause mainframe DevOps projects to fail.
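Python's standard `graphlib` module (Python 3.9+) is enough to illustrate the dependency and restart problems. The sketch assumes a hypothetical five-job batch stream and works at job level only; real JCL restarts can go down to the step or checkpoint level. It rejects dependencies on undefined jobs and runs jobs in dependency order. On a rerun, it skips everything that already completed instead of starting from the beginning.

```python
from collections.abc import Callable
from graphlib import TopologicalSorter

# HYPOTHETICAL nightly batch stream: job -> jobs that must complete first.
DEPENDENCIES: dict[str, set[str]] = {
    "EXTRACT": set(),
    "VALIDATE": {"EXTRACT"},
    "RATES": set(),
    "POSTING": {"VALIDATE", "RATES"},
    "REPORT": {"POSTING"},
}

def run_batch(
    deps: dict[str, set[str]],
    run_job: Callable[[str], bool],
    completed: frozenset[str] = frozenset(),
) -> set[str]:
    """Run jobs in dependency order and return the set of completed jobs.

    Jobs in `completed` (from a previous, failed run) are skipped, so a rerun
    resumes at the point of failure. Execution stops at the first failing job.
    """
    undefined = {p for preds in deps.values() for p in preds} - set(deps)
    if undefined:
        # A predecessor nobody defined usually means the dependency map is incomplete.
        raise ValueError(f"dependencies on undefined jobs: {sorted(undefined)}")
    done = set(completed)
    # static_order() raises graphlib.CycleError if the dependencies contain a cycle.
    for job in TopologicalSorter(deps).static_order():
        if job in done:
            continue
        if not run_job(job):
            break
        done.add(job)
    return done
```

If POSTING fails, the first run completes EXTRACT, VALIDATE, and RATES. Passing that set back as `completed` makes the rerun execute only POSTING and REPORT.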

A Realistic Framework for Cost and ROI Analysis

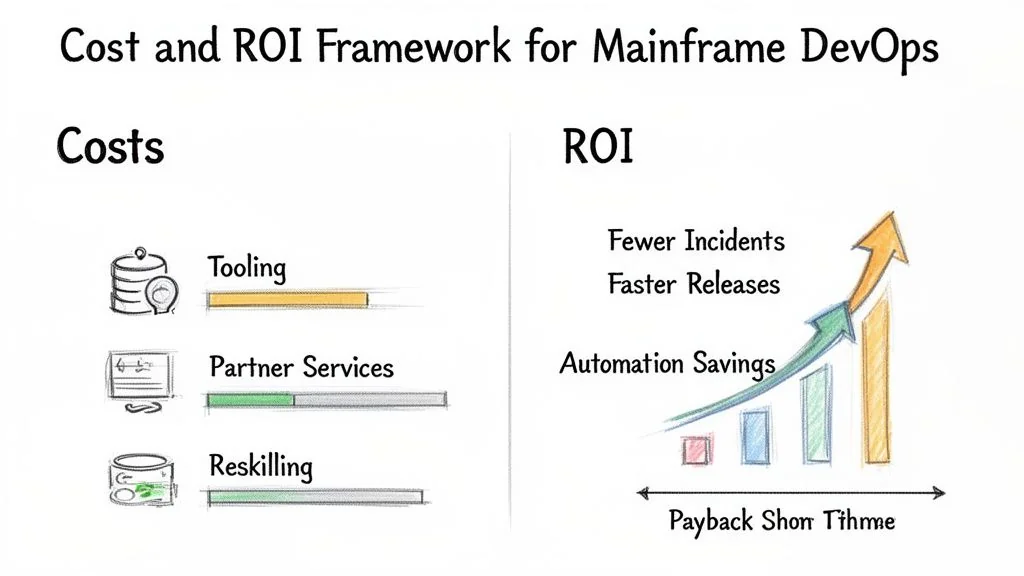

Building a business case for mainframe DevOps requires a financial framework grounded in hard numbers. The global mainframe modernization market is on track to hit $8.39 billion by 2025 and is expected to maintain a 9.7% CAGR through 2034, as detailed in this analysis from Data Insights Market. If your budget doesn’t meticulously account for the three core cost pillars—tooling, partner services, and internal resources—your project is at risk before it begins.

Deconstructing the Upfront Investment

The initial investment is almost always larger than executives anticipate. Here’s a realistic breakdown:

- Tooling Licenses: A major recurring expense. Budget for annual licenses or subscriptions for a modern SCM like GitHub Enterprise or GitLab, a CI/CD orchestrator (Jenkins itself is open source, but commercial support or alternative orchestrators carry fees), and essential mainframe-specific tools like IBM Dependency Based Build (DBB) and a zUnit-compatible testing platform.

- Partner Services: Expert implementation partners are expensive; their fees can easily match or exceed tooling costs. Modernization services often range from $1.50 to $4.00 per line of code, depending on complexity. This covers initial pipeline setup, migrating history from legacy SCMs, and training.

- Internal Resources: The cost center most frequently underestimated. It includes the cost of reskilling your veteran mainframe staff in Git and modern CI/CD concepts, plus the potential budget needed to hire new talent with hybrid skills.

Moving from Vague ROI to Quantifiable Metrics

The return on this investment must be built on metrics you can track and prove.

The true value of mainframe DevOps integration isn’t just speed. It’s about reducing the high cost of manual errors and redirecting that saved engineering time toward innovation.

Anchor your ROI model in these three quantifiable areas:

- Reduction in Manual Deployment Effort: Calculate the person-hours your teams currently burn on manual builds, testing, and deployments. A successful pipeline automates at least 80% of these tasks, which translates directly into operational savings.

- Decrease in Production Incidents: Track the number of production incidents caused by manual deployment errors over the last 12-24 months. Quantify the business impact of each outage—lost revenue, SLA penalties. Automation all but eliminates this class of error.

- Acceleration of Feature Time-to-Market: Measure your current “concept-to-cash” cycle for any feature that touches mainframe code. Post-integration, this timeline must shrink. Moving from a 90-day release cycle to a 14-day sprint cycle means you can release business value roughly six times as often (90 / 14 ≈ 6.4).

Using this framework lets you project a realistic ROI timeline based on your organization’s real-world numbers. For a deeper dive, see our analysis of modernization costs.
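Here is a minimal sketch of the ROI arithmetic. Every input is a placeholder for your own figures, and all names are hypothetical. The code combines the labor and incident savings described above and nets out recurring tooling costs. It then reports a payback period in months, which you can compare against the 24-month threshold used in this guide's next steps. The release-frequency helper shows why a 90-day to 14-day change is roughly a 6.4× increase.

```python
from dataclasses import dataclass

@dataclass
class RoiInputs:
    # Every value is a placeholder for your organization's own numbers.
    manual_deploy_hours_per_year: float  # build/test/deploy effort done by hand today
    loaded_hourly_rate: float            # fully loaded cost per engineering hour
    automation_share: float              # e.g. 0.8 if the pipeline automates 80% of it
    deploy_incidents_per_year: float     # production incidents caused by manual deployments
    cost_per_incident: float             # lost revenue + SLA penalties per incident
    incident_reduction: float            # share of those incidents automation prevents
    upfront_investment: float            # tooling + partner services + internal resources
    annual_running_cost: float           # recurring licenses and pipeline maintenance

def annual_net_savings(i: RoiInputs) -> float:
    labor = i.manual_deploy_hours_per_year * i.loaded_hourly_rate * i.automation_share
    incidents = i.deploy_incidents_per_year * i.cost_per_incident * i.incident_reduction
    return labor + incidents - i.annual_running_cost

def payback_months(i: RoiInputs) -> float:
    """Months until cumulative net savings cover the upfront investment."""
    savings = annual_net_savings(i)
    if savings <= 0:
        return float("inf")  # the investment never pays back
    return i.upfront_investment / savings * 12

def release_frequency_gain(old_cycle_days: float, new_cycle_days: float) -> float:
    return old_cycle_days / new_cycle_days
```

Modeling incident reduction as a separate input keeps the estimate honest. You can test how sensitive the payback period is to the assumption that automation "all but eliminates" deployment errors.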

How to Choose an Expert Partner, Not a Generalist

Choosing a partner for mainframe DevOps integration is a high-stakes decision with seven-figure consequences. The market is flooded with generalist IT consultancies that list “mainframe services” among a hundred other offerings—these firms are a liability. You need a partner with deep z/OS engineering in their DNA.

The wrong partner will try to sell you a generic DevOps template, burn months trying to force it onto your system, and stall the moment they hit a real mainframe roadblock like COMP-3 data or JCL libraries. A true specialist will ask you about these specific challenges in the first meeting because they’ve solved them dozens of times.

Vendor Evaluation Matrix for Mainframe DevOps Integration

Use this scoring matrix to move beyond sales pitches and compare potential partners based on what actually matters. This forces you to weigh criteria according to your specific needs and exposes a generalist’s weaknesses.

| Evaluation Criterion | Weighting (1-5) | Vendor A Score (1-10) | Vendor B Score (1-10) | Notes & Red Flags |
|---|---|---|---|---|
| Mainframe-Specific Tooling | 5 | | | Vague answers on DBB or Zowe? |
| z/OS & COBOL Engineering Depth | 5 | | | Are their “experts” just certified, or have they managed real systems? |
| Test Automation Strategy | 4 | | | Do they have a plan for batch test data? Ask how they handle COMP-3. |
| Enterprise Ops Automation (RPA/Zowe) | 4 | | | Do they think beyond the CI/CD pipeline to broader operational efficiency? |
| Client Upskilling & Training Model | 3 | | | Is their goal to empower your team or lock you into a managed service? |
| Proven Case Studies (Similar Scale) | 3 | | | Do their references match your MIPS and team size? |
| Pricing & Contract Transparency | 2 | | | Hidden T&M clauses? Vague SoW? |
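The matrix translates directly into a weighted score. The sketch below uses the weights from the table; the scores in the usage example are hypothetical. It normalizes the result back onto a 1-10 scale. It also separately flags any vendor scoring below 7 on either of the two heaviest criteria, the threshold this guide uses to identify a generalist. That check is kept separate because a strong weighted average can hide a failing score on the criteria that count most.

```python
# Weights copied from the evaluation matrix above.
WEIGHTS: dict[str, int] = {
    "Mainframe-Specific Tooling": 5,
    "z/OS & COBOL Engineering Depth": 5,
    "Test Automation Strategy": 4,
    "Enterprise Ops Automation (RPA/Zowe)": 4,
    "Client Upskilling & Training Model": 3,
    "Proven Case Studies (Similar Scale)": 3,
    "Pricing & Contract Transparency": 2,
}
CRITICAL = ("Mainframe-Specific Tooling", "z/OS & COBOL Engineering Depth")

def weighted_score(scores: dict[str, int]) -> float:
    """Weighted average of 1-10 scores, normalized back onto the 1-10 scale."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"unscored criteria: {sorted(missing)}")
    total = sum(weight * scores[name] for name, weight in WEIGHTS.items())
    return total / sum(WEIGHTS.values())

def is_generalist(scores: dict[str, int], threshold: int = 7) -> bool:
    """True if the vendor scores below the threshold on either critical criterion."""
    return any(scores[name] < threshold for name in CRITICAL)
```

Consider a vendor that scores 9 everywhere except 6 on Mainframe-Specific Tooling. It still averages about 8.4, yet `is_generalist` flags it.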

Technical Proficiency with Mainframe-Specific Tooling

Your first filter should be their expertise with the tools that bridge the modern and mainframe worlds. Any vendor can claim experience with Jenkins or GitLab. The real test is proven ability to integrate the specialized toolchain. Ask for direct proof of experience with these:

- Build & Compile: Have they used tools like IBM Dependency Based Build (DBB) to orchestrate native COBOL and PL/I builds from a Git-based pipeline?

- Automated Testing: Can they show you a zUnit framework they’ve implemented for automated COBOL unit testing?

- Code Quality & Security: Have they integrated static analysis such as SonarQube’s COBOL analyzer into a CI pipeline?

- Modern Mainframe Interfaces: What is their real-world experience level with Zowe for scripting mainframe actions and exposing services? A partner without hands-on Zowe experience is not a modern mainframe expert.

A generalist sells you a project and pushes for a long-term managed service contract that creates vendor lock-in. A real partner aims to build a capability, with a clear model for upskilling your developers. Their goal is to eventually make themselves redundant.

When to Say “No” to Integration and What to Do Instead

The smartest decision a CTO can make is sometimes to veto a full-scale mainframe DevOps integration. The blind pursuit of CI/CD for every system is a recipe for wasted millions. Pragmatic leaders know which battles aren’t worth fighting.

The Red Flags That Kill Your ROI

Pouring capital into a DevOps pipeline for an application that barely changes is a profound waste of budget. Check for these explicit red flags that signal a negative return on investment:

- Extremely Low Change Frequency: If a core banking application is modified only once every 2-3 years, the ROI of an automated pipeline is deeply negative. The cost of a few days of manual changes is a fraction of a multi-year integration project.

- Imminent Decommissioning: If the system is slated for retirement within the next 12-18 months, any investment in a CI/CD pipeline will become obsolete before it delivers any value.

- Absent Executive Sponsorship: A mainframe DevOps project is a significant cultural shock. Without an executive sponsor to break down organizational silos, the initiative is guaranteed to fail from resource starvation and institutional inertia.

In these situations, the only defensible decision is to consciously not invest. Documenting the rationale—low change velocity, impending retirement, or lack of sponsorship—protects your budget for projects that will drive business value.
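These red flags are simple enough to encode as a screening step before anyone writes a business case. In the sketch below, the one-change-per-year floor is an assumed threshold. The text above only says that one change every 2-3 years is far too low. The 18-month decommissioning horizon is the upper end of the range given above. All names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class SystemProfile:
    name: str
    changes_per_year: float
    months_to_decommission: Optional[float]  # None if no retirement is planned
    has_executive_sponsor: bool

def integration_red_flags(
    system: SystemProfile,
    min_changes_per_year: float = 1.0,        # ASSUMED floor; tune to your portfolio
    decommission_horizon_months: float = 18,  # upper end of the 12-18 month range
) -> list[str]:
    """Return the red flags that argue against a full CI/CD integration."""
    flags = []
    if system.changes_per_year < min_changes_per_year:
        flags.append("low change frequency")
    if (system.months_to_decommission is not None
            and system.months_to_decommission <= decommission_horizon_months):
        flags.append("imminent decommissioning")
    if not system.has_executive_sponsor:
        flags.append("no executive sponsor")
    return flags
```

An empty list means none of the red flags applies. Each entry can be copied straight into the documented rationale for not investing.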

The Pragmatic Alternative: Containment via APIs

Avoiding a full integration doesn’t mean living with the same bottlenecks. A more powerful and cost-effective alternative is a containment strategy. This treats the stable legacy core as a “black box” and wraps a robust API layer around it. Instead of automating the internal build process, you focus entirely on exposing its business logic through modern, well-documented REST APIs. This creates a critical buffer, decoupling your fast-moving front-end teams from the slow-moving back-end. It is a pragmatic compromise that delivers 80% of the agility benefits for 20% of the cost and disruption of a full-scale integration project.
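Here is a minimal sketch of the containment idea. It assumes a hypothetical legacy program that takes a 10-character account ID and returns a 43-character fixed-width record, already converted to text. The facade is the only component that knows the record layout. Callers see JSON-style dictionaries and HTTP-style status codes. The `call_legacy` transport is left as a parameter, because how you actually reach the program (for example, through CICS or an API gateway product) is site-specific.

```python
from collections.abc import Callable
from decimal import Decimal

# HYPOTHETICAL copybook layout of the legacy program's response record:
#   ACCT-ID X(10) | RET-CODE X(2) | CUST-NAME X(20) | BALANCE 9(9)V99 (implied 2 decimals)
ACCOUNT_ID_LEN = 10
RESPONSE_LEN = 43

def build_request(account_id: str) -> str:
    if len(account_id) > ACCOUNT_ID_LEN:
        raise ValueError("account id too long for the legacy field")
    return account_id.ljust(ACCOUNT_ID_LEN)

def parse_response(record: str) -> dict:
    if len(record) != RESPONSE_LEN:
        raise ValueError(f"expected {RESPONSE_LEN} characters, got {len(record)}")
    return {
        "accountId": record[0:10].rstrip(),
        "returnCode": record[10:12],
        "customerName": record[12:32].rstrip(),
        # Apply the implied decimal point exactly; no float conversion.
        "balance": str(Decimal(record[32:43]).scaleb(-2)),
    }

class AccountFacade:
    """Modern-facing API over a black-box legacy program."""

    def __init__(self, call_legacy: Callable[[str], str]) -> None:
        self._call_legacy = call_legacy  # HYPOTHETICAL transport, supplied by you

    def get_account(self, account_id: str) -> tuple[int, dict]:
        """Return an HTTP-style (status, body) pair."""
        parsed = parse_response(self._call_legacy(build_request(account_id)))
        if parsed["returnCode"] != "00":
            return 404, {"error": "account not found", "accountId": account_id}
        return 200, parsed
```

Because the layout lives in one place, a change to the legacy record touches only `build_request` and `parse_response`. The front-end contract stays the same.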

Your Next Steps

This guide has laid out the strategic imperatives, technical frameworks, and financial realities of mainframe DevOps integration. Generic summaries are a waste of your time. Your next step is to use the provided frameworks to make a concrete decision.

- Run the ROI Analysis: Use the Cost and ROI Analysis Framework from this article. Plug in your own numbers for manual deployment hours and incident rates. If the projected ROI is negative or the payback period exceeds 24 months, immediately pivot to the Containment via APIs strategy.

- Evaluate Your Partner: If the ROI is positive, use the Vendor Evaluation Matrix to score your current or potential partners. If any vendor scores below a 7 on Mainframe-Specific Tooling or z/OS & COBOL Engineering Depth, they are a generalist and pose a significant risk to your project.

- Start the Migration: If you have a positive ROI and a qualified partner, your immediate priority is executing the Source Code Migration plan. This is the single biggest bottleneck and must be addressed before any other pipeline work begins.

Do not get stuck in endless analysis. The data and frameworks are here. The decision is yours.