Unclogging Legacy Database Performance: A CTO's Guide to System Transformation

Slow performance isn’t a quaint feature of your legacy system—it’s an active business liability. Those legacy database performance bottlenecks are draining revenue, killing innovation, and creating security holes that directly impact your P&L. This is a guide for turning a core infrastructure liability into a competitive asset through modernization.

Why Your Legacy Database Is Costing You More Than Speed

Slow queries are the symptom, not the disease. The real damage is measured in stalled projects, lost customers, and spiraling operational costs. When the database lags, the entire organization is held hostage. This guide moves past abstract discussions of technical debt to provide a decision framework for CTOs and engineering leaders tasked with fixing this critical infrastructure failure. We will dissect common bottlenecks and outline remediation paths, from tactical tuning to full-scale transformation.

The Real Business Impact

The consequences of inaction are brutal and extend far beyond the IT department. They show up as hard numbers on financial statements, in operational chaos, and as strategic dead ends.

Here’s where the bottlenecks are doing measurable harm:

- Stalled Innovation: Your team has a backlog of revenue-generating features—AI integrations, real-time analytics, new product lines. But the database can’t handle modern workloads, creating an innovation gridlock that your competitors are exploiting. A rigid schema that requires a six-month overhaul for a single new feature means your architecture now dictates your business strategy, not the other way around.

- Drained Revenue: Slow application performance is a conversion killer. A widely cited industry benchmark puts the cost of a one-second delay at a 7% reduction in conversions. It’s a direct tax on your top line.

- Spiraling Maintenance Costs: The pool of engineers who can effectively triage and fix aging systems like Sybase or mainframe databases is shrinking and becoming prohibitively expensive. Budgets are consumed by emergency fixes instead of strategic modernization projects, with maintenance costs for some federal legacy systems exceeding $1 billion annually.

- Unacceptable Security Risks: Outdated databases are a goldmine for attackers. They lack support for modern encryption and fine-grained access controls, creating a massive attack surface that exposes you to breaches and crippling compliance fines. Non-compliance incidents in banking and healthcare can reach $10-20M per event.

The decision to modernize isn’t just technical; it’s a strategic imperative. Ignoring a legacy database is an active gamble where the cost of doing nothing will eventually—and inevitably—surpass the cost of migration.

This guide is a decision framework. We provide the specific metrics that signal you’re in real trouble and lay out the strategic trade-offs between tactical fixes and a full-scale migration. It’s a plan to reclaim your competitive edge.

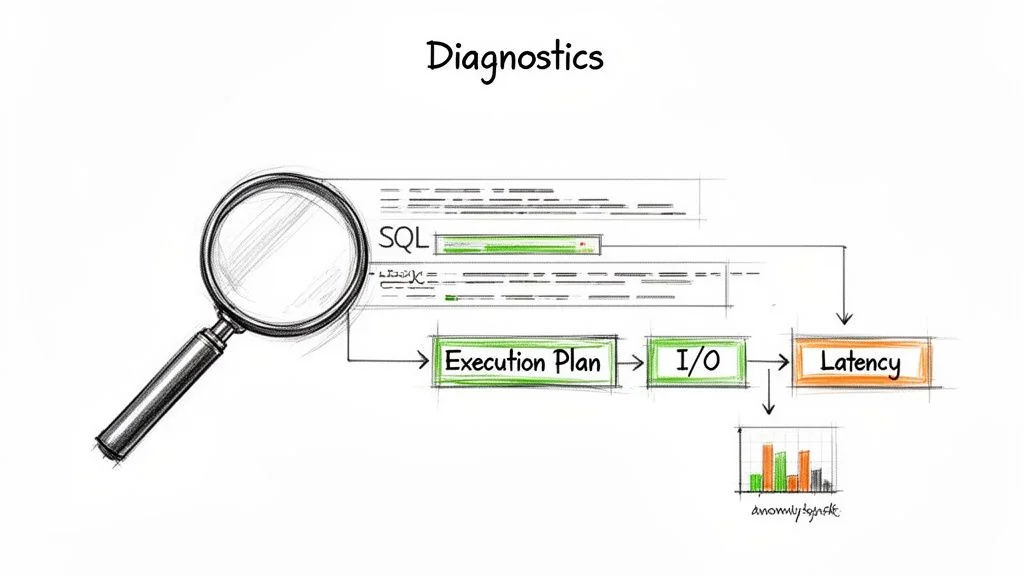

A Diagnostic Framework for Pinpointing Bottlenecks

Before fixing anything, you must find the real problem. The most common and expensive mistake teams make is throwing more hardware at an undiagnosed performance issue. This rarely solves anything; it just temporarily masks deep architectural flaws or grossly inefficient queries.

To move beyond guesswork, a systematic diagnostic framework is essential. This means looking past misleading surface-level metrics like CPU utilization. A pegged CPU is almost always a symptom, not the root cause. The real culprits are buried in query execution plans, I/O wait times, and hidden lock contention.

Starting with the Right Metrics

Effective diagnosis starts with collecting the right data—and knowing what to ignore. The metrics that truly matter depend on the database technology (a relational database behaves differently than a mainframe) and its specific workload. Your entire goal is to separate symptoms from causes. Get this wrong, and you’ll join the long list of misdiagnosed modernization projects that spiral over budget.

Here’s a practical guide for directing your engineering teams, mapping common bottlenecks to the specific metrics needed for a real diagnosis.

Common Legacy Database Bottlenecks and Key Diagnostic Metrics

| Bottleneck Type | Primary Symptom | Key Diagnostic Metrics | Diagnostic Tools |
|---|---|---|---|
| Inefficient Queries | Slow application response times, high CPU on the DB server. | Average Query Latency, P95/P99 Latency, Query Throughput. | Query execution plan analysis, SQL profilers, APM traces. |
| I/O Subsystem | Sluggish performance under load, even with low CPU usage. | Disk I/O Wait Times, Read/Write IOPS, Queue Depth. | iostat, perf, cloud provider storage metrics, wait statistics. |
| Concurrency & Locking | Application freezes, timeouts, unpredictable performance. | Lock Wait Times, Number of Deadlocks, Blocked Transaction Count. | Database-specific dynamic management views (e.g., sys.dm_os_waiting_tasks). |
| Index Issues | Formerly fast queries suddenly slow down drastically. | Index Fragmentation Level, Full Table Scan vs. Index Scan Ratio. | Index analysis scripts, execution plan viewers, database advisors. |

This table provides a starting point, but the real skill is in interpreting the data within the context of your business.

A critical mistake is treating all metrics equally. A 10% increase in CPU usage is background noise; a 10ms increase in average lock wait time for a core transaction table is a five-alarm fire. You must interpret data within the context of your application’s business-critical paths.
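To make the point about averages versus tail latency concrete, here is a minimal sketch that computes the mean, P50, P95, and P99 from a list of query latencies. The sample data is hypothetical, and the nearest-rank method used here is only one of several common percentile definitions; your monitoring tool may interpolate differently.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical latencies in milliseconds: 90 fast queries and 10 slow ones.
latencies_ms = [12.0] * 90 + [900.0] * 10

mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p95_ms = percentile(latencies_ms, 95)
p99_ms = percentile(latencies_ms, 99)

print(f"mean={mean_ms:.1f} ms  p50={p50_ms} ms  p95={p95_ms} ms  p99={p99_ms} ms")
```

With this sample, the median stays at 12 ms and the mean lands near 100 ms, while P95 and P99 both report 900 ms. Only the tail percentiles reveal what one in ten users actually experiences.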

Advanced Diagnostic Techniques

Once you have your baseline metrics, it’s time to dig deeper. Standard monitoring dashboards give you the “what,” but you need more granular analysis to find the “why.” Pinpointing the exact cause of a legacy bottleneck requires moving beyond the high-level view.

Three techniques are indispensable for this work:

- Execution Plan Analysis: An execution plan is the database’s roadmap for running a query. Analyzing it is non-negotiable. It shows you whether the database is navigating an index efficiently (an Index Seek, in SQL Server terms) or resorting to a brute-force, performance-killing Table Scan. A query that suddenly flips from one to the other is a classic culprit for system-wide slowdowns.

- Transaction Tracing: End-to-end tracing follows a single request from the application front end all the way to the database and back. This is how you prove whether the bottleneck is in your application code, the network, or the database itself. A slowdown might not even originate in the database but simply manifest there as a symptom of an upstream problem.

- Wait Statistics Analysis: This is the database’s own diagnostic journal. It reveals exactly what the database is spending its time waiting on. For example, high PAGEIOLATCH_SH waits in SQL Server tell you the system is waiting for data to be read from a slow disk into memory. It points you directly to an I/O bottleneck, eliminating guesswork.
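As a rough illustration of execution plan analysis, the sketch below uses SQLite (from Python's standard library) as a stand-in for a production engine. The `orders` table, the index name, and the data are hypothetical. It captures the plan for the same query before and after an index exists, so you can watch the plan flip from a full scan to an index search. Plan syntax and operator names differ between engines, so treat the output as illustrative only.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 1000, float(i)) for i in range(10_000)],
)

QUERY = "SELECT total FROM orders WHERE customer_id = ?"


def plan_details(connection, sql, params):
    """Return the human-readable detail column of SQLite's EXPLAIN QUERY PLAN."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[3] for row in rows]


# Without an index, SQLite has to scan every row of the table.
before = plan_details(conn, QUERY, (42,))

# With an index on the filter column, the plan becomes an index search.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
after = plan_details(conn, QUERY, (42,))

print("before:", before)
print("after: ", after)
```

The exact wording of each plan line varies by SQLite version, but the first plan reports a scan of `orders` and the second reports a search using `idx_orders_customer`.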

After using these techniques to identify problem queries, mastering SQL query optimization techniques becomes the logical next step to directly address the inefficiencies you’ve uncovered. This evidence-based approach ensures you invest resources in solutions that solve the actual problem, not just its symptoms.

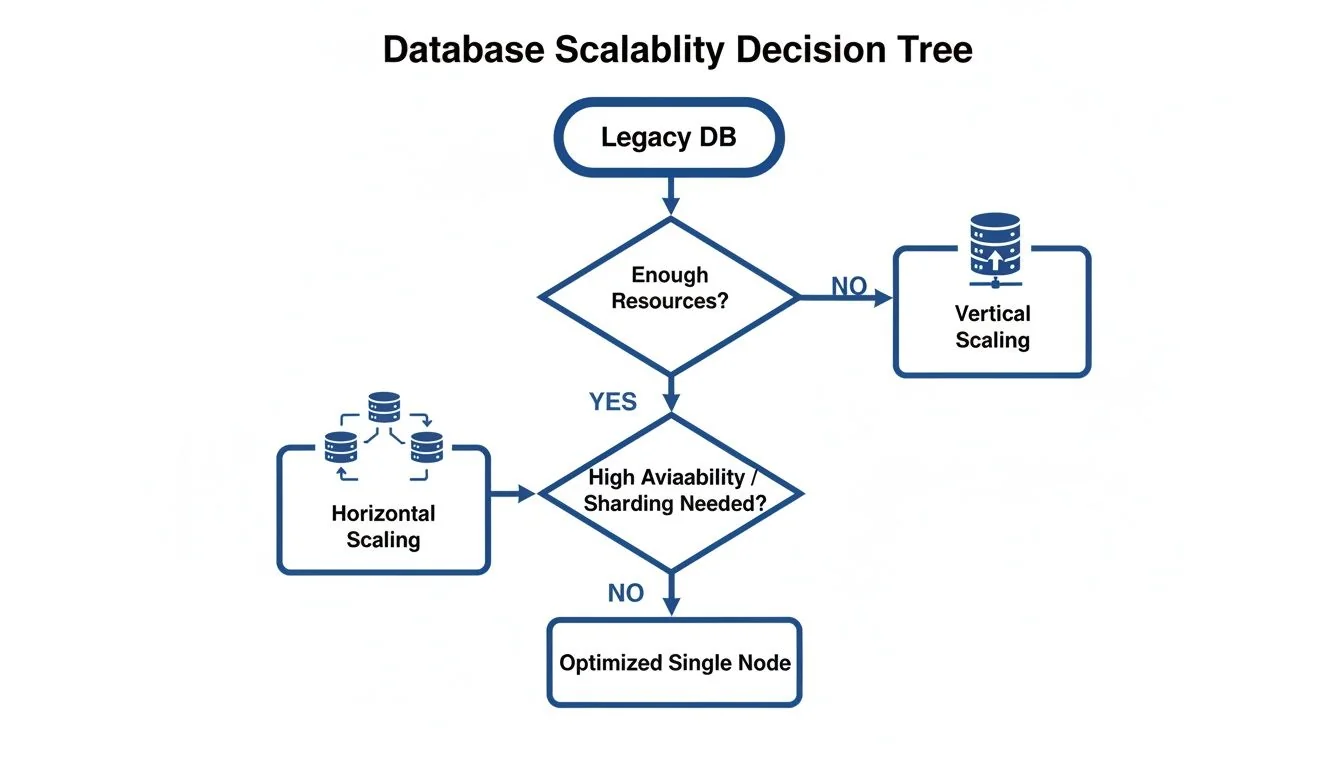

How Architectural Decay Cripples Scalability

Most legacy databases were engineered for a world that no longer exists—a world of predictable, monolithic workloads where the solution to every performance problem was simply to buy a bigger server. This fundamental design choice, vertical scaling, is the single biggest source of architectural decay crippling modern enterprises. These systems are fundamentally incompatible with the demands of microservices, cloud elasticity, and AI-driven analytics.

This outdated architecture eventually forces a decision it was never designed to make.

You can keep buying bigger, more expensive hardware until you hit a wall, or you can re-architect for horizontal scaling. Modern applications distribute their load across many commodity servers, a pattern most legacy databases simply cannot support.

The Vertical Scaling Trap

The problem starts at the very core of the monolithic design. All data lives in one tightly coupled schema, and every single query competes for the same finite CPU and memory resources. As data volume and user concurrency inevitably climb, this single point of contention becomes a catastrophic bottleneck.

For example, a major telecom provider’s billing system, built on a legacy relational database, ran without a hitch for years. But as they rolled out new streaming services and IoT plans, data ingestion rates exploded. During peak billing cycles, the database CPU would slam to 100% utilization, triggering cascading failures across their customer portals and payment systems. They had hit the vertical scaling wall, hard.

Rigid Schemas and Innovation Gridlock

Beyond the broken scaling model, outdated and brittle schemas are a primary driver of architectural decay. These schemas, often designed decades ago, have been twisted and contorted with years of patches and quick fixes. They are difficult to change, impossible to understand, and create a state of complete innovation gridlock.

A rigid schema doesn’t just slow down queries; it slows down the business. When adding a single new feature requires a six-month database overhaul project, you have lost the ability to compete. Your architecture is now dictating your business strategy.

This is precisely why 65% of organizations identify legacy platforms as their top blocker to cloud agility. They can’t adopt microservices because decoupling services requires a flexible, domain-oriented data model the monolithic database can’t provide. Failed “lift-and-shift” attempts cause infrastructure costs to balloon by 40% annually due to unmanaged compute.

Migrating a COBOL database averages $1.50-$4.00 per line of code, yet a staggering 67% of these projects fail due to ETL inefficiencies and schema redesign oversights. In contrast, expert partners who navigate these pitfalls routinely report outcomes such as 92% uptime post-migration. To understand more about these modernization challenges, you can explore the detailed analysis on enterprise growth. The inability to scale horizontally isn’t a minor problem; it’s the primary architectural failure that puts modern business initiatives on life support.

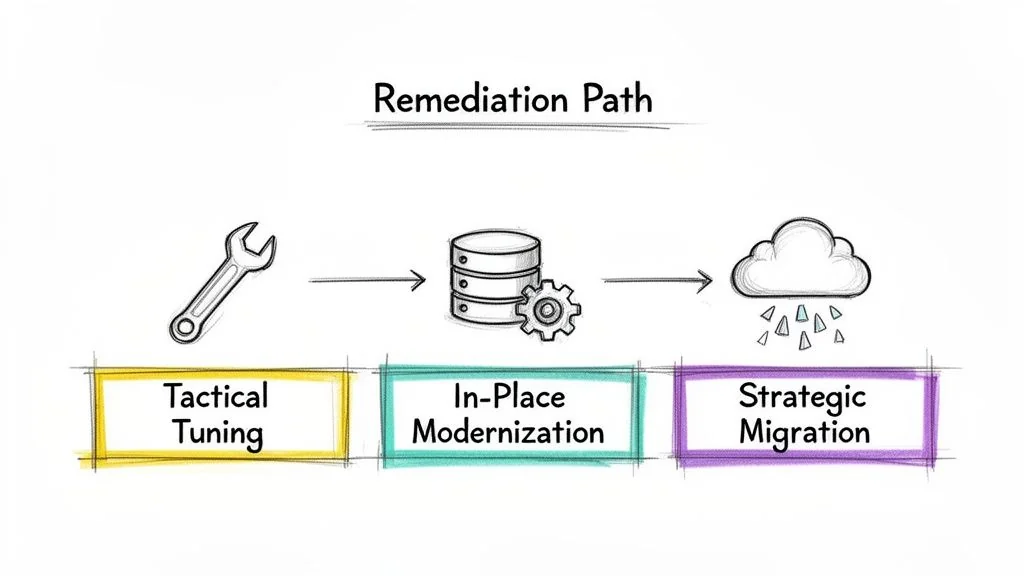

Remediation Paths: From Tactical Tuning to Full Transformation

Once you’ve diagnosed why your legacy database is slow, the real work begins. Deciding how to fix it isn’t a one-size-fits-all problem. The right move depends entirely on the business impact of the system, its expected lifespan, and where you want your architecture to be in three years. Jumping into a full migration when a simple index fix would have sufficed is a career-limiting mistake. So is applying band-aids to a system with fundamental architectural rot that’s holding the business back.

Tactical Tuning for Immediate Relief

When a bottleneck is isolated and the core architecture is still fundamentally sound, tactical tuning is your fastest path to a win. These are surgical, targeted fixes designed to stop the immediate bleeding without a major overhaul. They are perfect for systems with a limited remaining lifespan or for non-critical applications where a massive investment just can’t be justified.

These are the low-hanging fruit:

- Indexing Strategies: A correctly placed index can make a query 10x faster or more by sparing the database a slow, costly full table scan.

- Query Rewriting: Poorly written SQL is a shockingly common culprit. We’ve seen queries rewritten to use a more efficient join, cutting execution time from several minutes to under a second.

- Parameter Tuning: Legacy databases often run on decades-old default configurations. Adjusting parameters for memory allocation, parallelism, or query optimizer behavior can unlock significant performance gains.
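The query rewriting idea above can be sketched as follows, again using SQLite as a stand-in. The `customers` and `orders` tables are hypothetical. The first query runs a correlated subquery once per customer row; the rewrite aggregates once and joins. Whether the rewrite is actually faster depends on the engine, its optimizer, and the data, so the sketch only verifies that both shapes return identical results, which is the first check any rewrite should pass.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total INTEGER);
    INSERT INTO customers (id, name) VALUES (1, 'Ada'), (2, 'Grace'), (3, 'Edsger');
    INSERT INTO orders (customer_id, total) VALUES (1, 100), (1, 250), (2, 75);
    """
)

# Original shape: a correlated subquery evaluated for every customer row.
CORRELATED = """
SELECT c.name,
       (SELECT COALESCE(SUM(o.total), 0)
          FROM orders o
         WHERE o.customer_id = c.id) AS spend
  FROM customers c
 ORDER BY c.id
"""

# Rewritten shape: aggregate the orders once, then join the result.
JOINED = """
SELECT c.name, COALESCE(t.spend, 0) AS spend
  FROM customers c
  LEFT JOIN (SELECT customer_id, SUM(total) AS spend
               FROM orders
              GROUP BY customer_id) t
    ON t.customer_id = c.id
 ORDER BY c.id
"""

correlated_rows = conn.execute(CORRELATED).fetchall()
joined_rows = conn.execute(JOINED).fetchall()

# A rewrite is only safe to ship if it returns exactly the same rows.
assert correlated_rows == joined_rows
print(joined_rows)
```

In practice you would pair this equivalence check with before-and-after execution plans and timings taken on production-sized data.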

These fixes are relatively low-risk and can often be deployed within a single sprint. But make no mistake: this is about managing symptoms, not curing the underlying disease of architectural decay.

In-Place Modernization to Extend System Life

What happens when tactical fixes aren’t enough, but a full-blown migration is too disruptive or expensive? In-place modernization is the pragmatic middle ground. This strategy involves augmenting the legacy system with modern components to extend its life and boost performance without replacing the core database itself.

Key patterns that work in the field include:

- Implementing a Caching Layer: Placing an in-memory cache like Redis in front of the database can dramatically reduce read load, offloading up to 80% of requests from the overburdened legacy system.

- Adding Read Replicas: For read-heavy workloads, creating one or more read-only copies of the database is a classic and effective pattern. This allows you to shuttle reporting and analytical queries to the replicas, preventing them from bogging down the primary database that’s handling critical transactions.

- Schema Refactoring: Carefully refactoring a problematic table or normalizing a denormalized structure can resolve deep-seated performance issues. This is more invasive than tactical tuning but an order of magnitude less risky than a full migration.
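The caching pattern in the list above is often implemented as cache-aside: check the cache, fall back to the database on a miss, then populate the cache. The sketch below uses a small in-process TTL cache as a stand-in for Redis so that it runs without external services. `TTLCache`, `slow_db_lookup`, and `get_customer` are hypothetical names, and the 30-second TTL is an arbitrary assumption.

```python
import time


class TTLCache:
    """Tiny in-process stand-in for an external cache such as Redis."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # expired: treat as a miss
            return None
        return value

    def set(self, key, value):
        self._entries[key] = (self._clock() + self._ttl, value)

    def delete(self, key):
        self._entries.pop(key, None)


stats = {"db_reads": 0}


def slow_db_lookup(customer_id):
    """Hypothetical placeholder for a query against the legacy database."""
    stats["db_reads"] += 1
    return {"id": customer_id, "tier": "gold"}


cache = TTLCache(ttl_seconds=30)


def get_customer(customer_id):
    """Cache-aside read: try the cache first, fall back to the database on a miss."""
    key = f"customer:{customer_id}"
    cached = cache.get(key)
    if cached is not None:
        return cached
    row = slow_db_lookup(customer_id)
    cache.set(key, row)
    return row


for _ in range(5):
    get_customer(7)

print(stats["db_reads"])  # five reads reached the application, one reached the database
```

The hard part of this pattern is invalidation. Any write path that changes a customer row must also delete or update the cached key, otherwise readers see stale data until the TTL expires.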

In-place modernization buys you time. It solves pressing performance problems and supports new business features, but it adds complexity to your architecture. Treat it as a strategic bridge, not a final destination.

Strategic Migration for Long-Term Health

When a legacy database suffers from fundamental architectural flaws—like an inability to scale horizontally or a schema that’s become a “big ball of mud”—no amount of tuning or caching will truly fix it. In these cases, a strategic migration is the only viable path forward. This means moving the data and application logic to a modern platform built for today’s scale and resilience demands.

These are major undertakings, but they solve the problem for good:

- Re-platforming to a Cloud-Native DB: This involves moving from a legacy on-premises system like Sybase or Oracle to a distributed SQL database like CockroachDB or a managed service like Amazon RDS. This directly addresses core scalability and resilience issues that are impossible to fix in the old architecture.

- Database Sharding: For massive datasets, sharding breaks a single, monolithic database into smaller, faster, and more manageable pieces called shards. This is how you achieve true horizontal scaling.

- The Strangler Fig Pattern: This is a risk-mitigation strategy where you gradually migrate functionality. You build new features on the modern database and slowly “strangle” the legacy system over time, redirecting traffic piece by piece until the old system can finally be retired.
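For the sharding option in the list above, the core mechanism is a routing function that maps each key to exactly one shard. This is a minimal sketch assuming simple hash-modulo routing. The shard names and the key format are hypothetical.

```python
import hashlib

SHARDS = ["shard-0", "shard-1", "shard-2", "shard-3"]  # hypothetical shard names


def shard_for(key, shards=SHARDS):
    """Map a key to a shard with a stable hash, so every process agrees on placement."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(shards)
    return shards[bucket]


# Route 10,000 hypothetical customer keys and count how many land on each shard.
counts = {name: 0 for name in SHARDS}
for customer_id in range(10_000):
    counts[shard_for(f"customer:{customer_id}")] += 1

print(counts)
```

A cryptographic hash is used here instead of Python's built-in `hash()` because the built-in is randomized per process for strings. Note that plain modulo routing remaps most keys when the shard count changes, which is why production systems often use consistent hashing or a directory lookup instead.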

A strategic migration is the most expensive and complex option, often taking 12-24 months to complete. But for a business-critical system, it’s the only way to eliminate legacy database performance bottlenecks permanently. A well-planned migration, grounded in solid data modernization principles, is what transforms a technical liability into a genuine strategic asset.
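The Strangler Fig pattern described above usually comes down to a thin routing layer that decides, per feature, whether the legacy or the modern system serves a request. The sketch below is one hypothetical shape for that facade. The handler functions and feature names are placeholders, and a real implementation would typically sit in an API gateway or proxy.

```python
MIGRATED_FEATURES = {"invoices"}  # hypothetical: features already moved to the new platform


def legacy_handler(feature, payload):
    """Placeholder for a call into the legacy system."""
    return {"served_by": "legacy", "feature": feature, "payload": payload}


def modern_handler(feature, payload):
    """Placeholder for a call into the modern platform."""
    return {"served_by": "modern", "feature": feature, "payload": payload}


def route(feature, payload, migrated=MIGRATED_FEATURES):
    """Send each request to the modern system only if its feature has been migrated."""
    handler = modern_handler if feature in migrated else legacy_handler
    return handler(feature, payload)


first = route("invoices", {"id": 1})   # already migrated
second = route("payments", {"id": 2})  # still on the legacy system

# Cutting over another feature is a routing change, not a big-bang release.
MIGRATED_FEATURES.add("payments")
third = route("payments", {"id": 2})

print(first["served_by"], second["served_by"], third["served_by"])
```

Keeping the cutover decision in one place also gives you a fast rollback path: removing a feature from the migrated set sends its traffic back to the legacy system.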

When Security Gaps Become Performance Killers

Performance isn’t just about query speed. In legacy databases, the most destructive bottlenecks often stem from security and compliance failures. An unpatched, unsupported database isn’t just slow—it’s an active liability, creating operational drag that is far more damaging than a missing index. These outdated systems were never built for modern security demands like granular role-based access controls (RBAC) or end-to-end data encryption.

The Real-World Impact of Security Debt

For companies in regulated industries like finance and healthcare, these gaps are catastrophic. A legacy database that can’t support encryption at rest and in transit, or produce detailed audit logs, is a compliance disaster waiting to happen.

This security debt shows up in a few predictable, painful ways:

- Massive Attack Surfaces: Years of ad-hoc patches, forgotten open ports, and unmonitored user accounts create a sprawling, undefended perimeter.

- Compliance Paralysis: Trying to meet regulations like GDPR or HIPAA on a system from a different era becomes a nightmare of manual workarounds. This slows down every process and creates a compliance posture so brittle it’s guaranteed to break.

- Eroding Data Trust: When data governance is a mess, nobody trusts the data. Bloated schemas full of redundant or “dark” data don’t just kill report performance; they make it impossible to guarantee accuracy, undermining the entire business intelligence function.

The data confirms this is a widespread crisis. Security and compliance bottlenecks in legacy databases now plague 80% of enterprises. Unpatched vulnerabilities from the 2010-2020 era are driving a projected 363% spike in SQL injection risks between 2022 and 2025. This isn’t a technical footnote; it has staggering financial consequences. In banking and healthcare, non-compliance fines can hit $10-20M per incident, with these outdated systems causing 40% higher breach rates. You can find more details on these database management trends in recent reports from Dataversity.net.

How Data Precision Failures Derail Modernization

The connection between security, compliance, and performance becomes painfully obvious during a database migration. One of the most common and costly failure points is the loss of data precision, especially when moving data from mainframe systems that use specific data types not native to modern databases.

A shocking 67% of database modernizations fail due to precision losses, such as mishandling COMP-3 decimals found in COBOL data paths. This single oversight can cost over $3M in rework.

This isn’t a simple rounding error. It’s a fundamental break in data integrity that can silently corrupt financial records or patient data for months. It proves that security isn’t a separate concern from performance—it’s a core component of a functional, trustworthy system. When you overlook these critical data precision requirements, you are engineering a performance and business failure.
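To show what handling COMP-3 correctly involves, here is a minimal decoding sketch. It assumes the conventional packed-decimal layout: two binary-coded decimal digits per byte, with the final half-byte holding the sign. The function name, the accepted sign codes, and the sample bytes are assumptions for illustration. In a real migration, the number of decimal places comes from the COBOL copybook, not from the data.

```python
from decimal import Decimal

POSITIVE_SIGNS = {0x0A, 0x0C, 0x0E, 0x0F}  # 0xC is the usual positive code, 0xF unsigned
NEGATIVE_SIGNS = {0x0B, 0x0D}              # 0xD is the usual negative code


def unpack_comp3(raw: bytes, scale: int) -> Decimal:
    """Decode a packed-decimal field into an exact Decimal with `scale` decimal places."""
    if not raw:
        raise ValueError("empty field")
    nibbles = []
    for byte in raw:
        nibbles.append(byte >> 4)
        nibbles.append(byte & 0x0F)
    sign_nibble = nibbles.pop()  # the last half-byte carries the sign
    if sign_nibble in NEGATIVE_SIGNS:
        sign = 1
    elif sign_nibble in POSITIVE_SIGNS:
        sign = 0
    else:
        raise ValueError(f"invalid sign nibble: {sign_nibble:#x}")
    if any(digit > 9 for digit in nibbles):
        raise ValueError("invalid digit nibble")
    # Build the value from digits and exponent so no binary float is ever involved.
    return Decimal((sign, tuple(nibbles), -scale))


amount = unpack_comp3(b"\x12\x34\x5C", scale=2)  # digits 12345, positive, two decimals
refund = unpack_comp3(b"\x01\x23\x4D", scale=2)  # digits 01234, negative, two decimals
dime = unpack_comp3(b"\x01\x0C", scale=2)        # digits 010, positive, two decimals

print(amount, refund, dime)
print(dime * 3 == Decimal("0.30"))  # exact decimal arithmetic
print(float(dime) * 3 == 0.3)       # binary floats drift
```

The last two lines show the failure mode in miniature: the decimal total is exact, while the same arithmetic in binary floating point is not. Mapping packed decimals to a float column in the target database bakes that drift into every record.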

Next Steps: A Decision Framework for Modernization

As a leader, your job isn’t to fix queries; it’s to make defensible decisions that drive the business forward. When a legacy database is slowing you down, you must frame the solution around business outcomes.

Justifying the Cost of Database Modernization to the Board

Stop talking about technical debt. The board cares about risk, revenue, and competitive threats. Build your case on these three pillars, using real numbers:

- Operational Risk Reduction: “Modernizing our database reduces our breach probability by 40% by enabling current encryption standards, helping us avoid a potential multi-million dollar fine under GDPR.”

- Customer Experience Improvement: “This modernization project will cut our page load times by three seconds. Based on industry benchmarks, that’s projected to boost conversions by 7%.”

- New Revenue Enablement: “This project enables our real-time analytics platform, which allows us to compete directly with our top three market rivals for the first time.”

Finally, use hard data on the rising costs of finding developers with outdated skills to show cost avoidance. This turns an expense request into a strategic investment.

Making the Call: Optimize vs. Migrate

Avoid over-investing in a system on its way out or under-investing in one critical to your survival. Use this decision matrix.

| Decision Criteria | Optimize the Legacy System | Execute a Strategic Migration |
|---|---|---|
| Business Impact | The system is non-critical with low revenue impact. | The system is business-critical and directly impacts revenue. |
| System Lifespan | The application has a planned EOL of less than 3 years. | The system needs to support the business for 5+ years. |
| Architectural Need | Performance issues can be solved with tactical tuning (indexing, query rewrites). | The system requires horizontal scalability, which the current architecture cannot support. |
| Integration Needs | The system requires minimal integration with modern cloud services or APIs. | The system must integrate with modern cloud services, APIs, or AI platforms to stay competitive. |

These strategic choices are complex. To explore them further, you can learn more about our approach to legacy system modernization. Understanding the broader landscape of digital transformation is invaluable for aligning your technology stack with your long-term business goals. Your next step is to use this framework to build an evidence-based business case, transforming the conversation from a technical problem to a strategic opportunity.